Five months ago, Salesforce completed its acquisition of API pioneer MuleSoft for a cool $6.5 billion, saying it would “enable all enterprises to surface any data – regardless of where it resides – to drive deep and intelligent customer experiences throughout a personalized 1:1 journey.”

For those allergic to marketing guff, it’s just the first part of that sentence that matters; but matter it does: from retail to finance, many businesses still find it a formidable challenge to access the data they need, let alone use it regularly to generate actionable business intelligence.

For MuleSoft founder Ross Mason, it’s a challenge he has been grappling with ever since he found himself tasked with connecting legacy trading and ledger systems in a City investment bank over a decade ago; something he describes with a grimace as an “awful” experience.

Joining Computer Business Review’s editor Ed Targett in the company’s London offices, he talked through life since the sale, the IT-business divide that remains so prevalent in many businesses – and painted a vivid picture of ongoing data accessibility challenges in many firms.

What Inspired MuleSoft’s Creation?

It’s really, really hard to connect stuff together. If you’re not in software, it’s hard to understand why it’s so difficult. It’s just awful, because there’s never been any standardisation. The challenge often comes down to being able to connect two things that were written 40 years ago by some guy who’s probably retired now.

I was working in investment banking at that time. We were connecting legacy trading and ledger systems.

I had three teams of people doing integration. One was using files: dropping a file somewhere and picking it up somewhere else. Someone else was doing what was called enterprise application integration, which is very heavy connectivity to things like SAP.

And then, somebody else was playing around with this newer technology, at the time, a web service. And, none of them could even speak the same language to each other. I thought it was insane.

We were creating an architecture connecting seven systems, which, by the way, now seems like a really small number. (We tend now to look at tens or hundreds.) About 12 teams in total were involved.

The project cost €30 million and it took almost two years to complete. And, it was considered a success. When I came out, I’m like: “If that’s a success, then there’s just a tonne of room for growth.”

How Did You Feel About Selling Your Company – and How’s Life Under the ‘New Overlords’?

I loved being a public company. I had a bit of a lifelong dream to found a company and then go public. That was awesome. And I loved that period. But if you’re going to get acquired, for us Salesforce was the best acquirer, because they’re leading the charge and taking our process to the Cloud, which, again, was a big driver for our business.

Interestingly, we’re the first acquisition where they’re keeping us quite separate. So, our exec team, and then product, field, and marketing, are independent. We sell quite differently to the way they sell their products.

They sell to the business: The business is buying functionality, and vision, and how to get there. We’re much more about IT transformation. So, they’re good at connecting customers to your business. We’re very good at connecting your data to your business. Generally one is owned by the functional leaders in business, the other’s owned by the CIO…

This Makes Sense Here, But You’ve Mentioned Issues with a Business/IT Division at an Operational Level.

As a Company That Does a Lot of Work Helping Businesses Improve Access to Data, Can You Talk About Some of the Challenges You Encounter?

Often the biggest challenge is a cultural shift. IT is often still considered a cost center.

If you think about it, it’s really odd, especially in this day and age. IT own the most persistent, valuable, asset of the organisation, which is the data.

Yet there has been no progress in figuring out how to unlock that gold mine of data in ways that enable… other people in the organisation to use it in a way that makes sense to them.

This is the big problem, I think, with separating IT and the business. The business makes business decisions, based on customer acquisition and engagement. IT makes IT decisions, based on what they can run and manage.

And they haven’t really been talking for 20 years.

The business asks for something, they create a project, they scope the project, they deliver the project, and so on. And nothing in that process is reused the next time. You need to create a reusable building block so you can get access to it, and so can anyone else who winds up needing that same customer information.

Could You Provide A Real Example?

Sure. We worked with a company who have a pretty good data warehouse. They’ve managed to consolidate their product catalogue into one warehouse.

But every time you needed to access that information, you went to the data warehouse team, you told them what data you wanted, and they’d scope a project for you.

Then, they’d find a specialist to go and connect into that warehouse. And, it would take about 20 weeks…

Twenty Weeks? Is That Common?

Yes, because you’re trying to find people, you’re scheduling work.

And, because it takes so long, what happens is the business says: “It’s a nightmare getting data out, so I’m gonna talk to other people who also want data.”

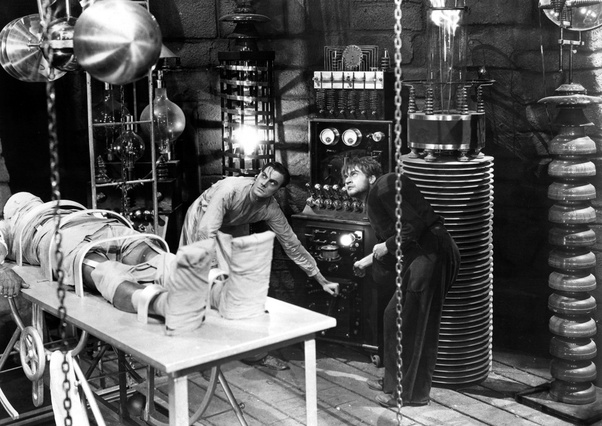

They start putting new data requests in the same project. What that does, it creates Frankenstein data sets. It’s stuff that’s useful for this small group of people that agree that we’re gonna get this data out, but not for anyone else.

So here’s what happens (and this is insane): They have a big data warehouse. They take out the data set, they clean it up for this particular use case, they put it into a database. They create all these rules for moving that data set into that database once a week or once a day. And, they run it.

So, what they’ve basically done is create a duplicate of the data, of that particular data set. They’ve only cleaned it here. They haven’t cleaned it in the data warehouse because that’s too big of a problem. And, they’ve created the need for a new database they’ve gotta run, and an ETL [extract, transform, load] integration script that runs nightly.

This company runs 500 IT projects a year, of which about 200 of them need this type of data. So, potentially they’re gonna create 200 new databases and scripts to get data out. You wouldn’t believe this is the way you do it. But, it is because in the old days, you couldn’t connect things to their warehouse. And this is still common.

Presumably There’s a Better Way…

What we did in this instance was say: “Why don’t we create read-only APIs for top-level requests to the data warehouse?” So, read-only is just “This is what a product looks like”/“This is what a GO looks like”/“This is what an item looks like.”

We’ll just create those as read-only APIs. And then, we’ll put those APIs into a data marketplace. And, that marketplace will just describe what the API data set gives you. And then we’ll provide simple instructions on how to plug that into Excel, Tableau, etc.

By doing so, we did two things: we stopped the craziness of creating lots of data sets. But, we also enabled reuse and self-service. Because people can now search for data that they want.

If they don’t find what they need, we created a little API SWAT team. They take a request for a particular data set, and they go and implement it within two weeks, instead of 20 weeks. And then they plug that into the data marketplace.

That starts to change the dynamic. It stops the business from creating Frankenstein data sets and it enables self-service because we allowed them to then plant these data sets into their tools. It doesn’t solve everything. But, it enables all the data analysts who need access to this base information to go and get their job done.
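The read-only API and data marketplace idea described above can be sketched roughly as follows. This is an illustrative sketch only, not MuleSoft's implementation: the in-memory `_WAREHOUSE`, the `ReadOnlyApi` and `Marketplace` classes, and the sample records are all hypothetical stand-ins for a real warehouse and catalogue.

```python
from dataclasses import dataclass
from types import MappingProxyType

# Hypothetical stand-in for the data warehouse: data set name -> records by id.
_WAREHOUSE = {
    "product": {"P-1": {"name": "Widget", "price": 9.99}},
    "item": {"I-1": {"product_id": "P-1", "quantity": 3}},
}


@dataclass(frozen=True)
class ReadOnlyApi:
    """A read-only view over one top-level data set (hypothetical)."""
    name: str
    description: str

    def get(self, record_id):
        """Return an immutable view of one record, or None if it is absent."""
        record = _WAREHOUSE[self.name].get(record_id)
        return MappingProxyType(record) if record is not None else None


class Marketplace:
    """A searchable catalogue describing what each published API offers."""

    def __init__(self):
        self._apis = {}

    def publish(self, api):
        self._apis[api.name] = api

    def search(self, keyword):
        """Return names of APIs whose name or description mentions keyword."""
        kw = keyword.lower()
        return sorted(
            api.name
            for api in self._apis.values()
            if kw in api.name.lower() or kw in api.description.lower()
        )

    def get_api(self, name):
        return self._apis[name]


if __name__ == "__main__":
    market = Marketplace()
    market.publish(ReadOnlyApi("product", "Catalogue entries with name and price"))
    market.publish(ReadOnlyApi("item", "Stock items with quantities"))
    print(market.search("price"))
    print(dict(market.get_api("product").get("P-1")))
```

The point of the design is that analysts search the catalogue and read from one shared source, rather than commissioning a cleaned-up copy of the data for each project.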

What Are You Working on Now?

We’ve got this concept of an application network which is like – I don’t want to make it sound too grand – but like a new internet. If you think of the internet at one level as being about connecting wires and the data bits, we’re connecting the things that sit on top of that, actually the data they’re moving between those applications.

[This is important when it comes to the] AI market. I’ve watched people create this big engine. You put data in there, and you run algorithms across it. And, when you come to the point of insight, there’s no way to effect the change. It’s like a brain in the jar. There’s no nervous system connected to the rest of the business that allows you to say, “Oh, if this happens, I want you to go and do X.”

I really see this application network as the nervous system for the enterprise. What it does is it normalises access to all data sets and all capabilities to the point where you can take an AI engine and just automate the outcomes much more easily.

I’ll give you a really basic example. [I’ve invested in a couple of startups] developing machine learning type of interfaces. They do these incredible demos where the language processing is unbelievable. They allow you to go: “Okay. Yep. That’s my policy. I’d like to renew it for another year. I also need to change my address.” And, “Okay, put your address in and then hit send.” They’re like, “See, we’ve done it.”

The problem is, in a company like a big insurance company, that address exists all over the place; it needs to be updated in 10, 12 places because there isn’t a single use of anything. And, without having that nervous system that knows where to find customer information and update customer information, plugging in new technologies and taking the manual processes out of that without it being heavily expensive is really difficult.
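To make the address example concrete, here is a minimal sketch of a single entry point that knows which systems hold a customer's address and updates all of them. The `CustomerDirectory` class, the system names, and the dict-based stores are assumptions made for illustration; real back-end systems would sit behind their own APIs.

```python
class CustomerDirectory:
    """Knows which back-end stores hold customer addresses (hypothetical)."""

    def __init__(self):
        # system name -> mapping of customer id to address
        self._systems = {}

    def register(self, name, store):
        """Register a back-end store under a system name."""
        self._systems[name] = store

    def update_address(self, customer_id, new_address):
        """Update the address everywhere the customer exists.

        Returns the sorted names of the systems that were changed.
        """
        updated = []
        for name, store in self._systems.items():
            if customer_id in store:
                store[customer_id] = new_address
                updated.append(name)
        return sorted(updated)


if __name__ == "__main__":
    crm = {"c1": "1 Old Road"}
    billing = {"c1": "1 Old Road", "c2": "5 High Street"}
    claims = {"c2": "5 High Street"}

    directory = CustomerDirectory()
    directory.register("crm", crm)
    directory.register("billing", billing)
    directory.register("claims", claims)

    print(directory.update_address("c1", "2 New Street"))
```

A new front end, such as a language interface, would then call one operation instead of needing to know about every system that stores the address.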

How Do You Tackle That?

We’re also investing in tools. We obviously have specialist integration tools for doing heavy back-end integration. But, we’ve got things like web-based flow designer tools for people who are looking for data sets, just to create them very easily in a web-based tool and make that available as an API and suck it into another application.

That should reach a point where anyone who’s an application user can do some basic integration tasks without having to really understand how integration works. A lot of our announcements around application network capabilities have been really around creating an enterprise graph of how things are connected.

So, we can, on our mobile app, understand when a request comes in, all the touch points it hits across the enterprise, and the back-end systems it hits. So you can map it out, which you couldn’t normally do. We can also do dependency analysis. So, if I’m gonna change a back-end system, we can now see upstream which other systems are depending on that data or that field.
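The dependency analysis mentioned here can be pictured as a walk over a graph of which systems read from which. The sketch below is a generic breadth-first traversal under assumed data, not a description of MuleSoft's product: the `DEPENDS_ON` map and the system names are hypothetical.

```python
from collections import deque

# Hypothetical enterprise graph: each system -> the systems it reads from.
DEPENDS_ON = {
    "mobile_app": ["customer_api"],
    "customer_api": ["crm", "billing"],
    "reporting": ["billing"],
    "crm": [],
    "billing": [],
}


def impacted_by(changed, depends_on):
    """Return every system that directly or transitively depends on `changed`."""
    # Invert the graph so we can walk from a source to its consumers.
    dependents = {}
    for consumer, sources in depends_on.items():
        for source in sources:
            dependents.setdefault(source, set()).add(consumer)

    seen = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for consumer in dependents.get(current, ()):
            if consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return sorted(seen)


if __name__ == "__main__":
    print(impacted_by("billing", DEPENDS_ON))
```

With this sample graph, a change to `billing` would flag `customer_api`, `reporting` and, through `customer_api`, `mobile_app`.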

What we’re moving towards is a model where connecting applications is as easy as connecting friends on Facebook. The vision is that you should be able to plug in Salesforce, or plug in Dynamics. And we should know enough about the application to understand what you should do next with it. We know there’s customer data. We know there’s opportunity data. We know that 100 other customers have connected that to SAP objects over here. Do you want to go and do the same thing?
