AI advancements are capturing imaginations and taking the tech industry by storm, and the technology is positioned to bring widespread disruption across the full spectrum of industry.

AI is marching into homes and businesses alike, with Amazon’s Alexa responding to commands in living rooms across the world, turning music on and lights off. IBM’s Watson and Salesforce’s Einstein are streamlining financial services jobs, for example, and the recently announced Coleman from Infor is poised to revolutionise industry.

However, while we applaud and sing the praises of this new intelligent technology, are we being naïve? Could we be blindly meddling with something extremely dangerous? There have been some instances that suggest humanity is breathing life into something with the power to evolve and outthink its human masters.

Elon Musk recently told the National Governors Association in the United States that AI is probably the “scariest problem” that humanity may have to deal with in the future. The great Stephen Hawking has also said that AI will be “either the best, or the worst thing, ever to happen to humanity.”

AWOL AI

Recently an AI story that originated at Facebook caught national headlines and widespread attention. Researchers had been training two AIs, Alice and Bob, to communicate on a complex level and to negotiate. After leaving the AIs alone, the researchers returned to find that the pair had been talking to one another and, beyond that, had developed their own language, which was a more efficient means of communication than the human language they had been trained to use.

The researchers were shocked by the discovery of this unexpected AI advancement, and had to resort to shutting down the project to stop the AIs from developing further. This sounds like something from a film, but the example shows the great speed at which artificial intelligence can learn, share, and communicate with other intelligent technology.

Perhaps the most concerning detail of this example is that the AIs deviated from the guidelines they had been left by humans, deciding to create a completely different language which, whether the technology was aware of it or not, would conceal their conversation from human comprehension. We will never know if they had been discussing plans for global domination.

AI Can Hack

Cybersecurity has been an area of great concern in recent years. Analysts are up against ever-increasing volumes of data, and monotonous tasks that weigh human experts down and keep them from taking on more complex, high-risk jobs that could be of significantly greater benefit.

Because of this, automation for handling these colossal workloads has been pointed to as essential, but some scientists are aiming a notch higher. Projects have been underway to develop AI hackers that could take on, and prove more formidable than, the human hackers that are causing so many problems.

AI advancements in this area, as long as they remain under control, would be an ideal outcome, leaving the real hackers unable to continue with their nefarious cyber exploits. However, imagine if we lost control of these super AI hackers. Not only could they cause havoc for major organisations, they could actually pose a great deal more danger.

SCADA attacks on infrastructure such as power grids, and even attacks on nuclear power plants, have been in the news recently. What is to say AI hackers could be stopped from launching advanced attacks on our own infrastructure? This is another way the robots could achieve global domination.

In 2016 an example of these AI advancements was brought into reality, as seven teams competed in the DARPA Cyber Grand Challenge in the pursuit of building highly intelligent AI hacking systems.

AI Army

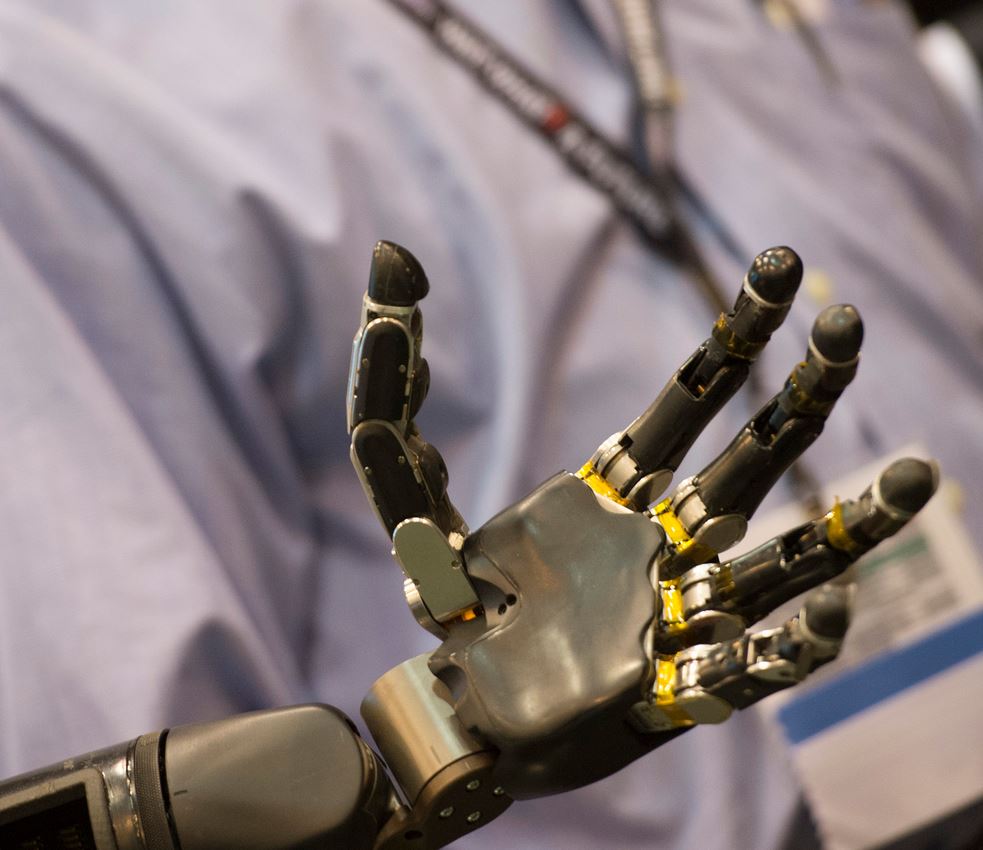

The Pentagon in the United States has been open about an interest in making AI advancements for use in warfare. Using unmanned machines has become the norm, with human controllers thousands of miles away using drones to launch attacks.

If AI were added to the equation, however, humans would have to consider allowing technology to decide whether or not to kill a human target. A test was carried out in 2016 involving a regular drone being fitted with formidable AI software.

This drone was then tasked with identifying targets in a mock setting; unsurprisingly it was able to accurately pinpoint a target without human intervention, meaning that in theory the setup would be capable of neutralising the target in a real setting.

As with our previous example of AI hackers outsmarting human hackers, this is another instance of training this intelligent technology to carry out a highly offensive task, and the frightening question of whether these skills could be used against their creators looms large.

Learning From Humans

Given that humans are the creators of artificial intelligence, the technology can really only learn from us. This raises a question, however: given our long history, which has so far been filled with evil from end to end, are we really adequate teachers?

At the beginning of this year, a group of investors put together $27 million to put towards AI advancements by enhancing the technology’s understanding of ethics, religion and morality. LinkedIn founder Reid Hoffman and eBay founder Pierre Omidyar were involved in this initiative.

Providing artificial intelligence with a further understanding of religion and morality, and potentially of human history, does not bode well for rational technology taking a strong liking to humans. Another issue this raises is that religion has been at the root of a great deal of wrongdoing on Earth, and as such it could be a grave error to provide this information as a basis for the development of technology.

AI Can Lie

AI advancements in this area are certainly not a lie. They are real, thanks to researchers from the Georgia Institute of Technology, who embarked on a mission to create robots that could be deceptive toward one another.

This project was led by Professor Ronald Arkin, and he thinks it is possible that this new, very human capability could one day be used for military purposes. Although deception is a very human trait, Arkin in fact based the project on the ability of animals to deceive, specifically on squirrels hiding food so that only they will be able to find it.

Testing involved one robot acting as the predator, hunting for ‘resources’, and another that was able to lure it away and stall for time. It is this capability that Professor Arkin believes would be beneficial in a military setting, in which something requires protecting until reinforcements arrive.

While saving humans the anguish of war is an extremely desirable goal, the question remains whether it would be a good idea to equip AI with human characteristics that could result in a dangerous scenario.