Nvidia has announced the Turing GPU architecture, a design intended to make real-time ray tracing possible for professional users.

On Monday, the tech giant said that the eighth-generation graphics processing unit (GPU) design will focus on real-time graphical performance and rendering through the introduction of ray tracing.

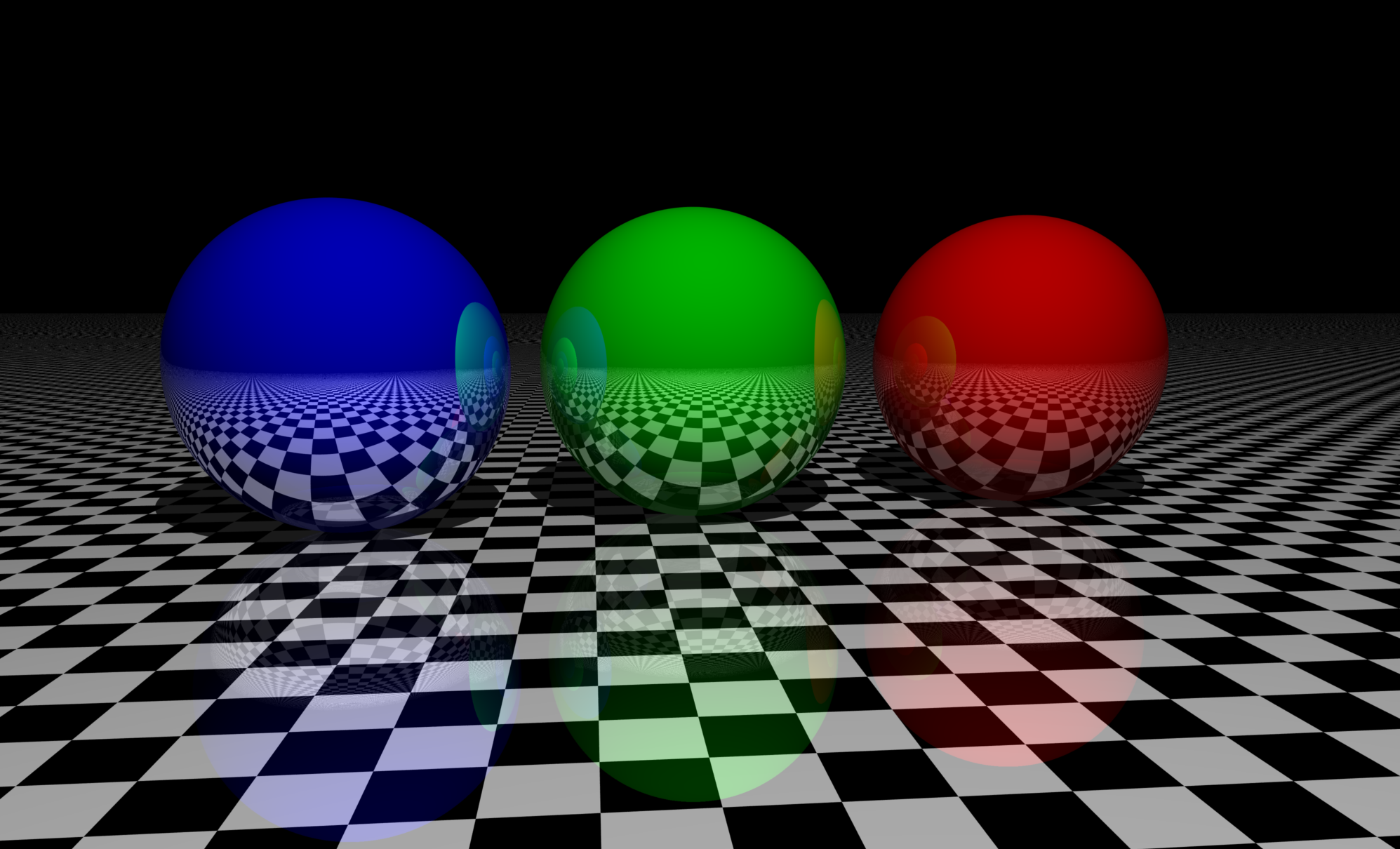

Modern graphics, game design, and professional rendering in both animation and photography have advanced in leaps and bounds in recent years. Ray tracing, however, has been touted as the next big leap in design, illustration, and gaming.

Ray tracing is already used by movie and television content creators to create the CGI and high-quality visual effects we have come to expect in modern media. However, the process requires extensive server and processing power, which has proved to be a costly and time-consuming endeavor. At least, until now.

Nvidia CEO Jensen Huang announced the first Turing-based GPUs, the Nvidia Quadro RTX 8000, Quadro RTX 6000, and Quadro RTX 5000, at the SIGGRAPH professional graphics conference in Vancouver.

The company claims the Turing architecture is the “greatest leap since the invention of the CUDA GPU in 2006.”

“This fundamentally changes how computer graphics will be done, it’s a step change in realism,” Huang said. “It has to be amazing at today’s applications, but utterly awesome at tomorrow’s.”

The GPU family features RT Cores, which are dedicated to ray tracing, together with Tensor Cores for AI inferencing. The Tensor Cores provide up to 500 trillion tensor operations a second, which boosts AI-based denoising, resolution scaling, and video re-timing.

Working Together

Together, these cores should allow ray tracing to run in real time and may also bring the technology to a wider pool of users.

Turing is touted as speeding up real-time ray tracing by up to 25 times compared with the older Pascal architecture when used with the OptiX API, which is designed for commercial ray tracing applications. Rasterization may perform up to six times more efficiently.

“At some point you can use AI or some heuristics to figure out what are the missing dots and how should we fill it all in, and it allows us to complete the frame a lot faster than we otherwise could,” the executive said. “Nothing is more powerful than using deep learning to do that.”

In addition, Nvidia unveiled the Quadro RTX Server, a system equipped with eight Turing GPUs in order to cut down rendering times.

According to the company, a set of four Turing 8-GPU servers can do the work of 240 dual-core servers “at 1/4th the cost, using 1/10 the space, and consuming 1/11th the power,” while slashing rendering times from hours to minutes.
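To put those figures in perspective, the short Python sketch below works through the arithmetic using only the numbers Nvidia quoted. The variable names are illustrative, and the result is a back-of-the-envelope reading of the company's claim rather than an independent benchmark.

```python
# Figures quoted by Nvidia in its announcement; the names are illustrative.
GPU_SERVERS = 4             # Quadro RTX Servers in the comparison
GPUS_PER_SERVER = 8         # Turing GPUs in each server
CPU_SERVERS_REPLACED = 240  # CPU-based render servers in the claim

# Total number of Turing GPUs across the four servers.
total_gpus = GPU_SERVERS * GPUS_PER_SERVER

# How many CPU servers each GPU server, and each GPU, is claimed to replace.
cpu_servers_per_gpu_server = CPU_SERVERS_REPLACED / GPU_SERVERS
cpu_servers_per_gpu = CPU_SERVERS_REPLACED / total_gpus

print(f"Total Turing GPUs: {total_gpus}")
print(f"CPU servers replaced per GPU server: {cpu_servers_per_gpu_server}")
print(f"CPU servers replaced per GPU: {cpu_servers_per_gpu}")
```

By Nvidia's own numbers, then, the claim amounts to 32 GPUs standing in for 240 CPU servers, or 7.5 CPU servers per GPU.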

The new architecture has caught the interest of a number of vendors. Dell, HP, HPE, Lenovo, Fujitsu, Boxx, and SuperMicro, among others, have signed up to support the processors.

Quadro GPUs based on Turing will be available from Q4 2018. The Quadro RTX 5000 will be available for $2,300, the Quadro RTX 6000 will be priced at $6,300, and the Quadro RTX 8000 will be on the market for $10,000.