Impersonating someone is hardly a revolutionary type of fraud, but this summer Patrick Hillmann, chief communications officer at cryptocurrency exchange Binance, found himself victim of a new approach to spoofing – using an artificial intelligence (AI) generated video also known as a deepfake.

In August, Hillmann, who has been with the company for two years, received several online messages from people claiming that he had met with them regarding “potential opportunities to list their assets in Binance” – something he found odd because he didn’t have oversight of Binance’s listings. Moreover, the executive said, he had never met with any of the people who were messaging him.

In a company blog post, Hillmann claimed that cybercriminals had set up Zoom calls with people via a fake LinkedIn profile, and used his previous news interviews and TV appearances to create a deepfake of him to participate in the calls. He described it as “refined enough to fool several highly intelligent crypto community members.”

This high-tech incarnation of the well-known “Nigerian Prince” email scam could have proved costly for victims, and for cybercriminals the prospect can be an alluring one. Instead of putting resources into traditional forms of cyber attack like DDoS attacks or hacking into accounts, they can potentially create a deepfake of a well-known company executive replicating their image and, in some cases, voice.

Bypassing the conventional cybersecurity authentication defenses, the hackers can video call a company worker or even telephone them and request a transfer of money to a “company bank account.” In Binance’s case, fraudsters were promising a Binance token in exchange for some cash.

But despite their high profile, instances of confirmed deepfake cyberattacks are few and far between. And though the technology is becoming easier to access and deploy, some experts believe it will retain a complexity that puts it out of the reach of many cybercriminals. Meanwhile, researchers are developing methods which could neutralise attacks before they begin.

Deepfake tools are becoming easier to use and less data-intensive

Henry Ajder is an expert on deepfake videos and other so-called “synthetic media”. Since 2019, he has researched the deepfake landscape and hosted a podcast on BBC Radio 4 on the disruptive ways these images are changing everyday life.

He found that the term “deepfake” originally surfaced on Reddit in late 2017, referring to a woman’s face being superimposed onto pornographic footage. But since then, he told Tech Monitor, it has expanded to include other kinds of generative and synthetic media.

“Deep faking voice audio is cloning someone’s voice either via text-to-speech or speech-to-speech, which is like voice skinning,” he explains. Voice skinning is when someone else layers a voice on top of their own in real-time.

Ajder continues: “There are also things like generative text models such as OpenAI’s GPT-3 where you can write a prompt and then get a whole passage of text which sounds like a human has written it.”

While the term has evolved to encompass a wider meaning, Ajder says that the majority of deepfake content has “malicious origins”, and is what he would term image abuse. He adds that the increasing commodification of the tools used to create deepfakes means they are easy to use and can be deployed via lower-power machines such as smartphones.

This evolution also means the end result is even more realistic. “You have this quite powerful triad of increasing realism, efficiency and accessibility,” Ajder says.

Deepfakes: fraud on steroids

What does this mean for businesses? While image abuse is more associated with private individuals, in the cybersecurity space Ajder says that there is an increasing number of reports of deepfakes being used against businesses, including voice-based attacks known as “vishing.”

“Vishing is like voice phishing,” he says. “People are synthetically replicating voices of business leaders to extort money or to gain confidential information.” Ajder points to several reports from the business world of millions of dollars being syphoned away by people impersonating financial controllers.

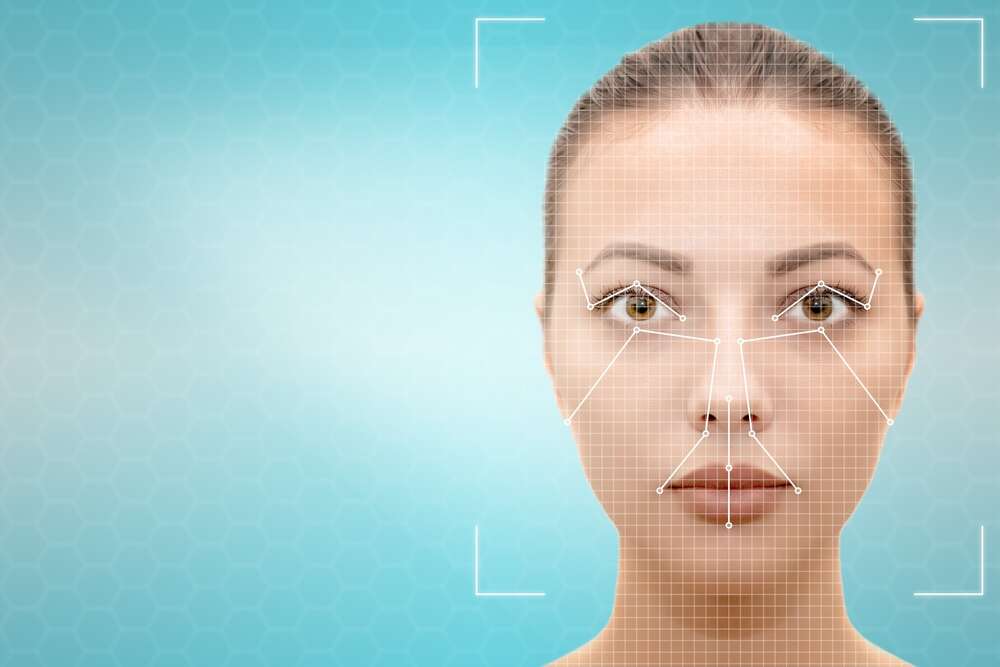

“Also, we’re seeing people increasingly using real-time puppeteering or facial reenactment,” Ajder told Tech Monitor. “This is the equivalent of having an avatar of someone whose facial movements will mirror my own in real-time. But obviously, the person on the other side of the call doesn’t see my face, they see this avatar’s face.”

This is the method thought to have been used to impersonate Binance’s Hillmann. Ajder describes the use of deepfakes in this way as “fraud on steroids” and says this is an increasingly common tactic deployed by cybercriminals.

Limited and conflicting information regarding deepfake cyberattacks

While there have been reports about the use of deepfakes and the opportunities they offer to cybercriminals, confirmed reports of their deployment remain limited.

David Sancho, senior threat researcher at Trend Micro, believes that the problem is a real one. “The potential for misuse is very high,” he says. “There have been successful attacks in all three use cases [video, image and audio] and in my opinion, we will see more.”

The researcher references one attack that took place in January 2020, which is being investigated by the US government. On this occasion, cybercriminals managed to convince an employee of a United Arab Emirates-based bank that they were a director of one of its customer companies by using deepfake audio, as well as forged email messages. The bank employee was convinced to make a transfer of funds of

However, Sophos researcher John Shier told Tech Monitor that there is no real indication that cybercriminals are using deepfakes “at scale”.

“It doesn’t seem like there’s a real concerted effort to include deep fakes in cybercrime campaigns,” he says.

Shier believes the complexity involved in creating a convincing deepfake is still sufficient to put many criminals off. “While it’s becoming easier every day, it’s still probably beyond most of the cybercriminal gangs to do at scale and at the speed that they’d like to versus simply just sending out three million bulky phishing emails all at once,” he says.

Academics develop ways to identify deepfakes

As deepfakes become more sophisticated, academics in the cybersecurity space are fighting back. Academics at New York University have developed a method dubbed GOTCHA (the name is a tribute to the CAPTCHA system widely used to verify human users of websites) to try to identify deepfakes before they can do any damage.

The method incorporates requests for humans to perform tasks, such as covering their face or making an unusual expression which the deepfake’s algorithm is unlikely to have been trained on. However, the team behind the study notes that it could be “difficult” to get users “to comply with testing routines,” and also proposes automated checking via the ability to impose a filter or sticker on a stream to confuse the deepfake model.
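The challenge-and-response idea described above can be illustrated with a short sketch. This is not the NYU team's implementation: the challenge lists, the `Challenge` type, the scoring function and the 0.5 threshold are all hypothetical, and the per-challenge scores are assumed to come from some separate detector that is not shown here.

```python
import random
from dataclasses import dataclass

# Hypothetical challenge pools. The prompts echo the examples in the article;
# how they are grouped and selected here is an assumption for illustration.
ACTIVE_CHALLENGES = ["cover part of your face", "make an unusual expression"]
PASSIVE_CHALLENGES = ["overlay a filter on the stream", "overlay a sticker on the stream"]


@dataclass
class Challenge:
    kind: str    # "active" (the caller must do something) or "passive" (applied automatically)
    prompt: str


def pick_challenges(rng, n_active=1, n_passive=1):
    """Randomly choose challenges so an attacker cannot prepare for them in advance."""
    active = [Challenge("active", p) for p in rng.sample(ACTIVE_CHALLENGES, n_active)]
    passive = [Challenge("passive", p) for p in rng.sample(PASSIVE_CHALLENGES, n_passive)]
    return active + passive


def looks_genuine(scores, threshold=0.5):
    """Accept the call only if every challenge response scored at or above the threshold.

    `scores` are realism scores between 0 and 1, one per challenge, assumed to be
    produced by an external detector (hypothetical, not part of this sketch).
    """
    return bool(scores) and min(scores) >= threshold
```

Taking the minimum score rather than the average reflects the reasoning in the article: a deepfake only needs to break down on one task it was not trained for to give itself away.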

Progress has also been made to detect audio deepfakes by researchers at the University of Florida. They have developed a way to measure the acoustic and fluid dynamic differences between voice samples created organically and those that are synthetically generated.

Trend Micro’s Sancho says criminals may find ways round such defences. “Bear in mind that there is more than one [type of algorithm], so if the results are not great with one, the attacker can fine-tune it or try with another one until the end product is convincing,” he continues. “Starting material can be chosen so that the final product is good enough; attackers are not looking for perfection, just to be convincing for whatever objective they’re after.”

Deepfakes have already seen success in business fraud – even more so in the form of romance scams and revenge porn – and academics clearly see them as a threat. How long until we’re seeing vishing or face-swapping at the same scale as phishing campaigns?

“It’s crazy how far the technology has come in such a short period of time,” says Ajder. “If I’m trying to impersonate someone to get confidential information, to financially extort or scam, [cybercriminals] are going to require very little data to generate good models. We’re already seeing massive strides in this respect.”