Hewlett Packard Enterprise’s Discover event in Las Vegas showcased new software and hardware products, new customers and the company’s view of the long-term future of enterprise technology.

Executives presented updates to existing technology that are ready to ship now, as well as a more detailed glimpse of what HPE believes business computing will look like in future. The Machine is the company’s big idea: an entirely new, photonics-based computing infrastructure.

HPE CEO Meg Whitman also announced the company’s new partnership with Docker, which will see Docker’s ‘work anywhere’ code included in all HPE servers. Docker lets enterprises create applications that work on any platform.

The open source software will work across HPE’s portfolio including its upcoming composable infrastructure products.

Docker packages applications in containers, allowing them to run more efficiently and use fewer resources than on virtualised systems. Data centres can make big savings on hardware, software licensing, and power and cooling costs by using containers.

Aside from costs, containers provide a strategic advantage in terms of speeding up deployment of new functions and applications.

Whitman predicted the deal would reduce both the appetite for, and the strategic importance of, VMware investments.

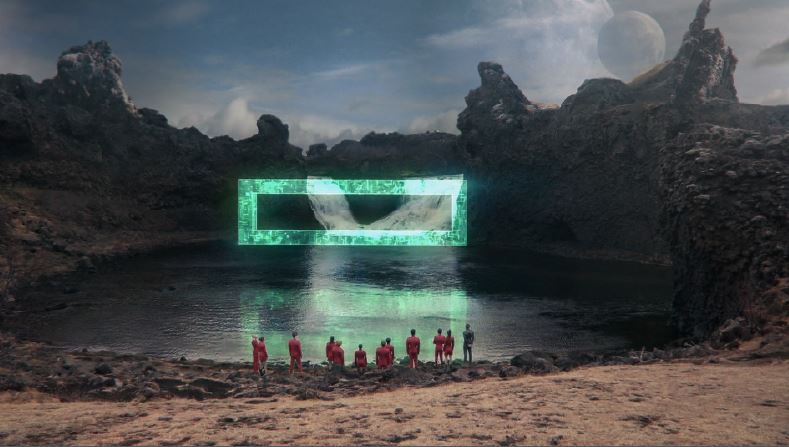

Looking quite literally into the future, attendees in Las Vegas also heard about HPE’s work with Paramount Pictures on the upcoming Star Trek film, Star Trek Beyond.

The film will highlight possible use cases for ‘The Machine’, HPE’s view of a photonic future in which light-speed communication within and beyond computers replaces copper wires. This will allow components of computing infrastructure such as memory and processing power, which today need to be very close to each other, to be physically separated.

Back in the present, HPE called on developers to start getting familiar with what will be an entirely new kind of computing infrastructure.

HPE Labs is beginning to open up access to software for The Machine in order to get developers up to speed with the change. The new infrastructure will create new opportunities for software developers.

The company said: “With the fundamental shift in how The Machine will work, it’s important that we invite open source developers very early in the software development cycle to start familiarizing with new non-volatile Memory-Driven Computing programming models.”

There will be more announcements in the coming months, but HPE Labs is making four pieces of software available immediately.

These are: a new database engine that makes use of multiple processors and non-volatile memory; fault-tolerant, multi-threaded programming tools to improve performance in the event of system crashes or power failures; an emulator for fabric-attached memory; and an emulator for checking the latency and bandwidth performance of commodity hardware.

For developers and enterprises more focussed on the present day, HPE also revealed several updates to its Helion Cloud family.

Meg Whitman also used the event to explain the thinking behind the spin-off and merger of HPE’s enterprise services arm with CSC. Whitman said the move would allow the company to better focus on providing solutions through its partners. It will create a services giant with predicted revenues of $26bn a year by next year.

Whitman contrasted the move to create a smaller, faster and more nimble company with Dell’s debt-funded move to integrate storage giant EMC.

With this strategic change, HPE will be better able to concentrate on creating converged and hyper-converged systems for customers, Whitman believes.

HPE also used the Las Vegas event to unveil new ‘Internet of Things’ (IoT) technology. It follows the firm’s vision of ‘Converged IoT systems’, which shift intelligence out of the data centre and ‘to the edge’, where the devices and sensors are.

Mark Potter, SVP and Chief Technology Officer, HPE Enterprise Group, said: “There are going to be 26 billion devices connected, providing information and data—ranging from pumps to aircrafts to wind turbines. It’s not just about connected phones, laptops, and devices. The digitization of all that data, breaking it free from the silos it’s been in, and putting a business process around it is incredibly transformative for every industry.”

HPE Edgeline EL1000 and EL4000 systems provide data analytics and machine learning at the edge. They include data capture, management and analysis, which in turn means fewer data transmissions and lower associated costs. They are designed to withstand the rough and ready environments in which they are likely to be used.

Delegates heard the example of a single blade on a single turbine on a wind farm, which might create as much as 500GB of data every single day. With every turbine made up of three blades, this would create an unmanageable amount of data to send back and analyse.

HPE systems promise to crunch those numbers at the edge and send back only useful, actionable knowledge to central systems.
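The scale of the turbine example is easy to check with some back-of-the-envelope arithmetic. The short Python sketch below uses only the two figures quoted at the event (500GB per blade per day, three blades per turbine). The 100-turbine farm is a hypothetical size chosen purely for illustration, not a number HPE gave.

```python
# Figures quoted in the example: up to 500 GB per blade per day,
# and three blades per turbine.
GB_PER_BLADE_PER_DAY = 500
BLADES_PER_TURBINE = 3


def daily_raw_data_gb(turbines: int) -> int:
    """Raw sensor data generated per day, in GB, for a number of turbines."""
    return turbines * BLADES_PER_TURBINE * GB_PER_BLADE_PER_DAY


# ASSUMPTION: a 100-turbine wind farm, used only to illustrate the scale.
HYPOTHETICAL_TURBINES = 100

per_turbine_gb = daily_raw_data_gb(1)
per_farm_gb = daily_raw_data_gb(HYPOTHETICAL_TURBINES)

print(f"One turbine: {per_turbine_gb} GB per day")
print(f"{HYPOTHETICAL_TURBINES} turbines: {per_farm_gb / 1000} TB per day")
```

On those figures a single turbine could generate up to 1.5TB of raw data a day, which is why sending everything back to a central system quickly becomes impractical.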

Big data is the big promise behind IoT technology and HPE is convinced that dealing with that data will need to be done locally, not centrally, to provide systems which fulfil their promises.

To support this vision of the future, the company also announced a partnership with GE Digital to include GE’s Predix analytics platform on HPE IoT products.

GE provides software solutions for various vertical markets using IoT technologies. HPE will become a preferred partner to support GE Digital’s Predix while Predix becomes a preferred software partner for HPE’s IoT solutions.

Additionally, HPE will seek to certify 100 Predix developers around the world.

HPE was also celebrating customer wins including moving some of Dropbox’s services onto HPE systems and away from Amazon Web Services.

Whitman said the move was an endorsement of HPE’s view that there were limits to the benefits of public cloud. She said issues of scale, of cost and of compliance meant that hybrid cloud was a more sensible solution for some companies.

Automation, software-defined storage and hyper-convergence were all mentioned as major customer drivers supported by the roadmap to composable infrastructure.

There’s more from HPE’s official blog here:

http://community.hpe.com/t5/Discover-Insider/bg-p/DiscoverInsider#.V1_dJJErK00

The company’s next big event is the Big Data Conference in Boston from 29 August to 1 September 2016.

Star Trek Beyond image courtesy of Paramount Pictures