In the early 1970s, Chinese leader Zhou Enlai was asked to comment on the implications of the French Revolution. “It is too early to say,” he famously remarked, and the quote is often cited to illustrate the long sweep of history. In fact, he was commenting on the much more recent student protests in France in 1968.

He is not alone in being misunderstood. Language mishaps have been blamed for everything from wars and diplomatic blunders to misfiring advertising campaigns and product launches. For global businesses trying to crack new markets and manage far-flung workforces, language differences are an important, if rarely acknowledged, barrier to growth. More than 7,000 languages are estimated to be spoken in the world today.

The average multinational company operates in 40 countries, and for some the number exceeds 200 – an indication of the sheer language diversity facing most large companies. Even if businesses have translation capabilities for the most widely used languages – English or Mandarin, for example – less widely spoken languages can still present a serious barrier to international growth.

Businesses are now turning to technology as part of the solution. Spurred by the shift to remote work and teleconferencing during the pandemic, instant language translation (ILT) technologies have boomed over the past year. Zoom, the video-conferencing platform, recently announced its intention to acquire an AI start-up specialising in real-time language translation. Cisco Webex, a rival video-conferencing platform, offers real-time translation in more than 100 languages, ranging from ‘Armenian to Zulu’. iTourTranslator, an AI start-up based in Shanghai, provides real-time subtitled translation of video and telephone calls.

But how do these technologies work and to what extent can they really begin to crack the language barrier?

What is instant language translation technology?

At the heart of ILT technologies are AI-based systems that automatically translate written text or speech from one language to another, using a mix of machine translation algorithms, speech recognition and optical character recognition. In practice, a huge variety of applications and systems exist, some mature and others still fledgling. Some translate text, while others enable real-time spoken translation. The underlying technologies also vary: while nearly all use some form of machine learning, some applications use it to support, or work alongside, human translators for added accuracy.

Some applications focus on specialised domains, such as military, legal or medical translation. Lingua Custodia, a machine translation company based in France, uses machine learning to translate financial documents such as annual reports, prospectuses, fact sheets, and legal and regulatory material. Dublin-based Iconic uses neural networks to translate the hundreds of thousands of legal documents that law firms around the world must handle during the discovery process. Its machine translation service was able to translate more than 1,000 foreign-language legal documents per day for its client Inventus, a global legal support services provider.

For businesses, ILT technologies promise smoother and faster expansion into new markets, and more seamless integration of global workforces. Alibaba’s DingTalk, for example, uses AI translation to let members of a multinational team communicate and collaborate in their own languages. Fifteen million companies have joined DingTalk for work collaboration.

Daniel Wellington, a fast-growing Swedish watchmaker, used Unbabel’s AI-based translation algorithm to support its expansion into new markets. In particular, the algorithm helped the company’s sales agents handle a surging volume of interactions in less common languages, such as Russian, Indonesian and Thai, for which few translators are available.

The limitations of instant language translation

Despite the promise of ILT technologies, there is a significant problem to be reckoned with: communication is about much more than translation of text or speech.

First, context matters – greatly so in some circumstances and for some languages. As humans, we recognise that a dinner date differs from a business meeting or a doctor’s appointment, and we adjust our language, tone and non-verbal cues accordingly. As Dr Ángeles Carreres, a linguistics expert at the University of Cambridge, explains: “In some languages people say things explicitly – such as a direct refusal – while in others these actions are silenced or said indirectly”.

Second, by some estimates, 60 to 70% of communication is non-verbal, with meaning supplied by tone, context and body language. According to Dr Elisabetta Adami, an associate professor at the University of Leeds: “Communication is increasingly multi-modal. Gaze, gesture, and body language all convey meaning beyond words. In the digital era, new forms of communication are cropping up. Consider how, for example, we often respond to a message with a video, image, music or emoji.”

Dr Adami adds that such non-verbal communication in digital environments is even more crucial today, “given how much of our activities have moved online during the pandemic – a shift that is likely to remain also in the future”.


Given these limitations of culture and context, what kind of innovations could turn ILT technologies from tools of translation into tools of meaning and communication? Three improvements could make the difference.

The first major change would be better calibration to context. “Humans can make sure the tone of translated language is consistent with the context,” explains Dr Carreres. “If we were able to select the context for machine translation – a dinner gathering as opposed to a business meeting, for example – we can start to solve the lost-in-context issue in machine translation.”

But the real leap would be algorithms that can do this automatically. Already, systems such as Google Neural Machine Translation translate an entire sentence as a single unit, rather than piece by piece. Google reported that this reduced translation errors by an average of 60% compared with its earlier phrase-based system. However, as Dr Marcus Tomalin, an expert on machine translation at the University of Cambridge, explained: “The current generation of translation systems can process only one sentence at a time. Machines cannot retain the information that was acquired from previous sentences.” What is really needed are systems with a longer memory, capable of processing multiple sentences together to extract meaning and context more precisely.

The second major change would be a greater capacity to recognise and respond to emotional cues. A frown, a smile, a quizzical glance: such expressions can reveal our true emotions in ways words cannot. Combining language translation with emotion-tracking technology would be a significant step forward.

Prof Luis Pérez-González of the University of Manchester said: “In the field of audio description for visually impaired readers, we are seeing experimental research using electrodermal activity to measure viewers’ emotional reaction. I can see the possibility to combine this research with translation to understand which type of audio description translation would resonate with them, e.g., factual descriptions or culturally adapted descriptions.”

Significant strides are being made in the separate field of emotional AI, which uses facial recognition, eye movements or oxytocin measurements to help track people’s emotions in real time. Google is attempting to fuse machine translation and emotional AI through Translatotron, a speech-to-speech translation model that retains the original speaker’s vocal characteristics, such as tone and emotion, in the translated speech. As these technologies mature, one can imagine further possibilities: a telemedicine video consultation in which a patient’s smartwatch changes ‘I’m ill’ to ‘I’m in serious pain’, or oxytocin monitors that show an overseas customer is rapidly losing interest, despite the friendly tone of the translation.

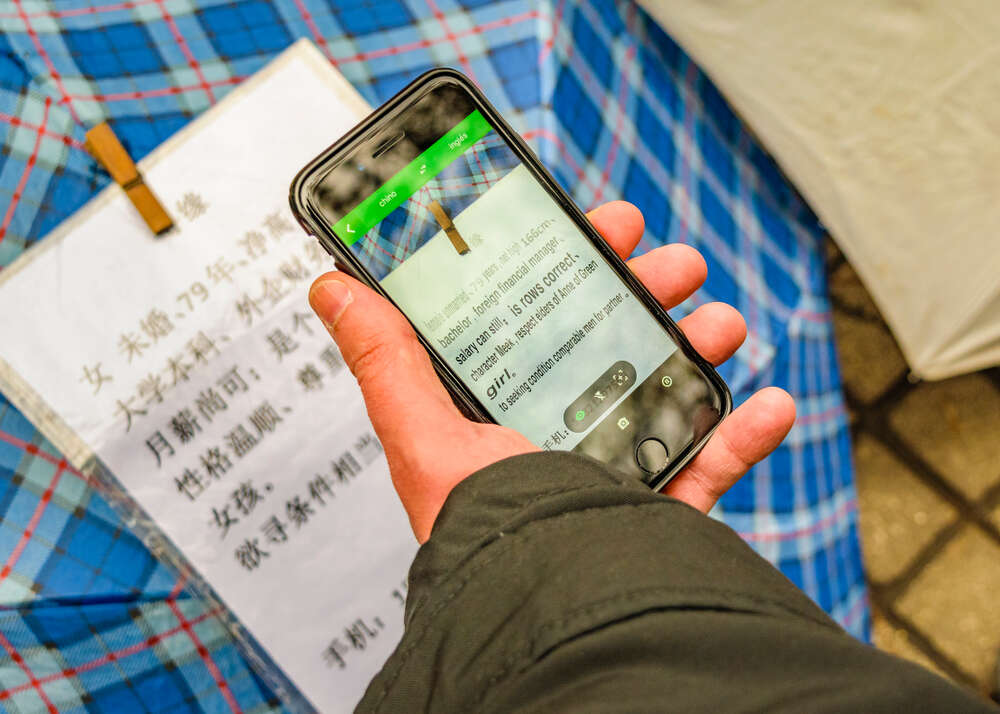

The final frontier is the translation of images: not the accompanying text, but the images themselves. This may seem counter-intuitive. Surely an image of an overseas holiday destination, for example, does not change in translation? But, as marketers and advertisers have long known, its meaning can and does change depending on the viewer, which is why many invest considerable time and money in adapting visual campaigns to different markets.

Consider, for example, the Visit Britain website. It leads with images of natural scenery on its Dutch version, pictures of afternoon tea and ice cream cones on its Chinese version, and a panoramic view of Big Ben and the London Eye on its US English version. Or consider China’s growing danmu, or ‘barrage’, culture, in which hundreds of users overlay their own interpretations of films, music or games in real time. Algorithms that can accurately infer visual meaning, even in dynamic contexts, would be a game-changer for the technology.

Whether the prize is new commercial opportunities or new experiences and ways of thinking, the world has so much to say. ILT technology can get us talking again. With more attention to culture and context, it might even help us understand each other better, too.