Google’s advances with its Artificial Intelligence chips, called TPUs, have helped to remove the need for it to increase its data centre footprint.

While fellow cloud players Amazon Web Services and Microsoft have been aggressively launching new cloud regions, supported by a mixture of newly built and colocation data centre projects, Google Cloud Platform appears to have removed this need thanks to its Tensor Processing Unit (TPU), although the company did reveal plans last year to open more data centres.

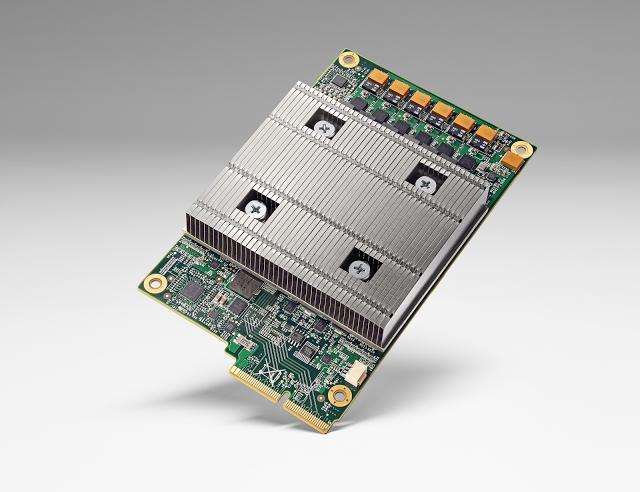

The project started several years ago as a way to develop a custom ASIC (application-specific integrated circuit) for machine learning workloads.

TPUs have now been in use in Google’s data centres for more than a year and “deliver an order of magnitude better-optimized performance per watt for machine learning,” the company said on its blog.

Benchmarks revealed by the company show that a TPU is 15X to 30X faster than current GPUs and CPUs on the market.

The company said: “The need for TPUs really emerged about six years ago, when we started using computationally expensive deep learning models in more and more places throughout our products. The computational expense of using these models had us worried.

“If we considered a scenario where people use Google voice search for just three minutes a day and we ran deep neural nets for our speech recognition system on the processing units we were using, we would have had to double the number of Google data centers!”

In addition to improving the speed of operation, the TPUs are said to be far more energy efficient than conventional chips, achieving a 30X to 80X improvement in TOPS/Watt (tera-operations per second per watt).
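For readers unfamiliar with the TOPS/Watt measure, the short Python sketch below shows how such a ratio could be computed. The function names and all of the throughput and power figures are hypothetical, chosen only to illustrate the arithmetic; they are not Google’s measurements of any real chip.

```python
def tops_per_watt(ops_per_second: float, watts: float) -> float:
    """Return tera-operations per second delivered per watt of power."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return ops_per_second / 1e12 / watts


def improvement(new: float, baseline: float) -> float:
    """Return how many times larger `new` is than `baseline`."""
    return new / baseline


# Hypothetical figures for illustration only; not measurements of any real chip.
baseline = tops_per_watt(ops_per_second=2e12, watts=100.0)      # about 0.02 TOPS/Watt
accelerator = tops_per_watt(ops_per_second=45e12, watts=75.0)   # about 0.6 TOPS/Watt

ratio = improvement(accelerator, baseline)
print(f"{ratio:.0f}X improvement in TOPS/Watt")
```

The point of the metric is that a chip can win on efficiency by doing more operations per second, by drawing less power, or both, which is why it matters for data centre capacity planning.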

If that weren’t enough to make the technology sound impressive, Google said that the neural networks powering the applications require just 100 to 1,500 lines of code, based on TensorFlow.