AWS has turbocharged its custom Arm chips, unveiling a new set of cloud instances underpinned by homegrown AWS Graviton2 processors that it says are seven times faster than last year’s Arm-based offering, putting Intel and NVIDIA firmly on notice.

The release comes a year after the cloud heavyweight first touted a family of Arm-based A1 cloud instances, built on processors custom-designed by AWS around the Arm architecture. (Arm-based servers are significantly more energy efficient, an incentive for AWS, which is paying the power bills, but have lacked firepower.)

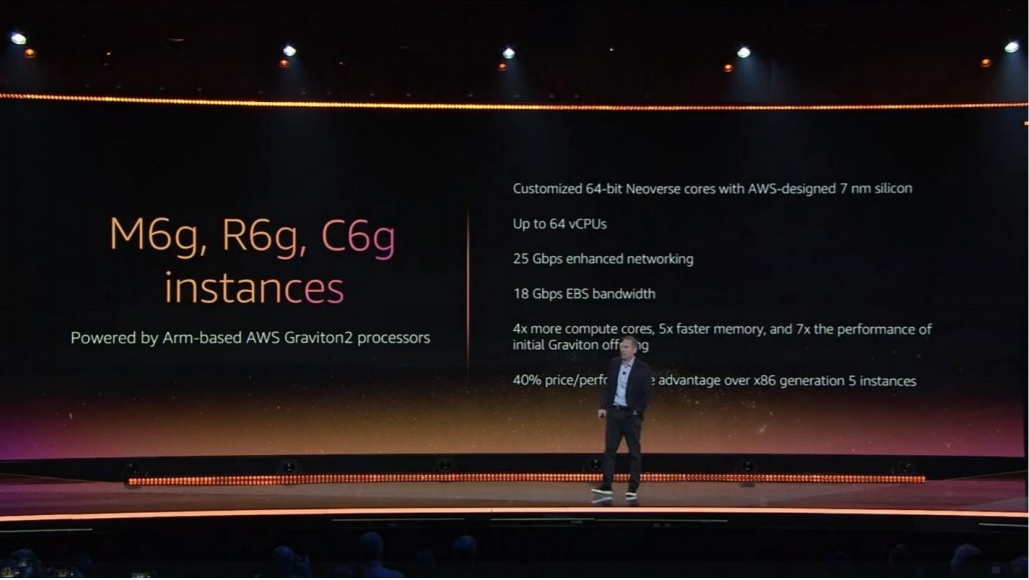

The Graviton2 processor, revealed today at AWS’s re:Invent conference, is an upgraded custom AWS design built using a 7nm manufacturing process. It is based on 64-bit Arm Neoverse cores and doubles floating-point performance, while additional memory channels and double-sized per-core caches boost memory access a further five times.

Arm engineers worked side by side with Annapurna Labs, a wholly owned AWS subsidiary responsible for building the Graviton processor family, to design the chips. They are the first built using Arm’s N1 architecture, part of Arm’s first extensive CPU core roadmap aimed specifically at infrastructure.

AWS CEO Andy Jassy told the audience in his keynote speech at the Las Vegas event that users can “run virtually all your instances on this, with a 40 percent better price performance than x86 instances.”

AWS will be switching a range of its own IaaS/SaaS services from x86-based to Arm-based architecture, it added.

This thing kicks the living crap out of the current Intel powered c5/m5 instance families.

It's time for the world to learn that "cross-compiling" isn't about you using your computer when you're angry.

Well, it is, but it doesn't start that way. #reinvent pic.twitter.com/NC27slRG7V

— Corey Quinn (@QuinnyPig) December 3, 2019

Jassy added: “We had three questions when we launched A1 instances: 1) Will anyone use them? 2) Will the partner ecosystem step up? 3) Can we iterate enough on this first Graviton family?”

The answer to all of these questions has been a firm “yes,” he said, pointing to partners and users including Red Hat, CBS and Datadog.

AWS claimed its initial benchmarks show the following per-vCPU performance improvements over the M5 instances:

- SPECjvm® 2008: +43% (estimated)

- SPEC CPU® 2017 integer: +44% (estimated)

- SPEC CPU 2017 floating point: +24% (estimated)

- HTTPS load balancing with Nginx: +24%

- Memcached: +43% performance, at lower latency

- X.264 video encoding: +26%

- EDA simulation with Cadence Xcellium: +54%

The new instances will come in three variants, dubbed M6g (1-64 vCPUs and up to 256 GiB of memory), R6g (1-64 vCPUs and up to 512 GiB of memory) and C6g (1-64 vCPUs and up to 128 GiB of memory).

The latter two will be available in early 2020; the first is available now.
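For a rough sense of how the three families differ, the memory-per-vCPU ratio at the top of each range can be worked out from the figures quoted above. This is only an illustrative sketch: the `FAMILIES` table and the `gib_per_vcpu` helper are hypothetical names, and it assumes the maximum memory figure pairs with the 64-vCPU size, which the announcement implies but does not spell out.

```python
# Top-end figures as quoted in the article: (max vCPUs, max memory in GiB).
# Keys are lowercased family names.
# Assumption: the maximum memory corresponds to the 64-vCPU size.
FAMILIES = {
    "m6g": (64, 256),
    "r6g": (64, 512),
    "c6g": (64, 128),
}


def gib_per_vcpu(family: str) -> float:
    """Return memory per vCPU (in GiB) at the largest quoted size."""
    vcpus, memory_gib = FAMILIES[family]
    return memory_gib / vcpus


if __name__ == "__main__":
    for name in FAMILIES:
        print(f"{name}: {gib_per_vcpu(name):.0f} GiB per vCPU")
```

On these numbers the compute-leaning family works out to 2 GiB per vCPU, the general-purpose one to 4 GiB and the memory-leaning one to 8 GiB.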

Graviton2 and New Inf1 Instances

Jassy also showcased new “Inf1 Instances” designed for machine learning/AI workloads and again powered by custom Arm designs.

These demonstrate three times higher throughput than G4 instances powered by NVIDIA chips, he said, and cost a claimed 40 percent less. (The offering comes integrated with TensorFlow, PyTorch et al.)

AWS plans to use the Graviton2-based instances to power Amazon EMR, Elastic Load Balancing, Amazon ElastiCache, and other AWS services.

Memory on the instances, meanwhile, is encrypted with 256-bit keys that are generated at boot time and never leave the server, AWS added.


More to follow.