Distributed computing start-up Monster API believes it can deploy unused cryptocurrency mining rigs to meet the ever-growing demand for GPU processing power. The company says its network could be expanded to take in other devices with spare GPU capacity, potentially lowering the cost of developing and accessing AI models.

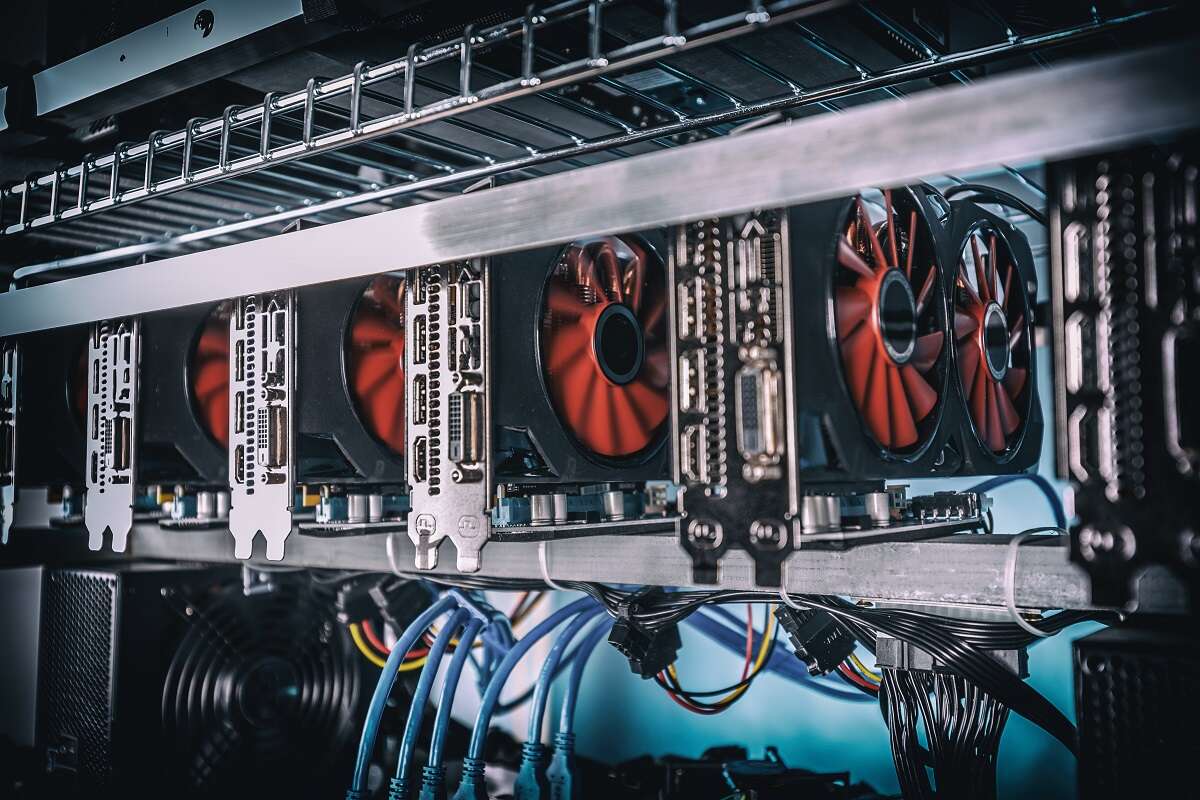

GPUs have often been deployed to mine cryptocurrencies such as Ethereum. Mining is resource-intensive and demands a great deal of compute, and at the peaks of the crypto hype cycle this led to a shortage of GPUs on the market. As prices rocketed, businesses and individuals turned to gaming GPUs produced by Nvidia, which they converted into dedicated crypto-mining devices.

Now that interest in crypto is waning, many of these devices are gathering dust. This led Monster API co-founder Gaurav Vij to realise they could be repurposed for the latest compute-intensive trend: training and running foundation AI models.

While these GPUs don’t have the punch of the dedicated AI hardware deployed by the likes of AWS or Google Cloud, Gaurav says they can train optimised open-source models at a fraction of the cost of using one of the cloud hyperscalers, with some enterprise clients saving up to 80%.

“The machine learning world is actually struggling with computational power because the demand has outstripped supply,” says Saurabh Vij, co-founder of Monster API. “Most of the machine learning developers today rely on AWS, Google Cloud, Microsoft Azure to get resources and end up spending a lot of money.”

GPUs to provide additional revenue for data centres?

As well as mining rigs, unused GPU power can be found in gaming systems like the PlayStation 5 and in smaller data centres. “We figured that crypto mining rigs also have a GPU, our gaming systems also have a GPU, and their GPUs are becoming very powerful every single year,” Saurabh told Tech Monitor.

Organisations and individuals contributing compute power to the distributed network go through an onboarding process that includes data security checks. Their devices are then added as required, allowing Monster API to expand and contract the network based on demand. Contributors also receive a share of the profit made from selling their otherwise idle compute power.

Monster API currently relies on open-source models, but says it could build its own if the communities funding new architectures lost support. Many of the biggest open-source models originated at large companies or major labs, including OpenAI’s transcription model Whisper and Meta’s LLaMA.

Saurabh says the distributed compute system brings down the cost of training a foundation model to the point where, in future, such models could be trained by open-source and not-for-profit groups, not just by large tech companies with deep pockets.

“If it cost $1m to build a foundational model, it will only cost $100,000 on a decentralised network like us,” Saurabh claims. The company can also configure the network so that a model is trained and run within a specific geography, such as the EU, to comply with GDPR restrictions on transferring data across borders.

Monster API says it now also offers “no-code” tools for fine-tuning models, opening access to those without the technical expertise or resources to train models from scratch and, the company says, further “democratising” access to compute power and foundation AI.

“Fine-tuning is very important because if you look at the mass number of developers, they don’t have enough data and capital to train models from scratch,” Saurabh says. The company says it has cut fine-tuning costs by up to 90% through optimisation, with fees of around $30 per model.

Monster API says it can help developers innovate with AI

Looming regulation of artificial intelligence could directly affect companies training models and open-source projects, but Saurabh believes open-source communities will push back against overreach. Monster API says it nonetheless recognises the need to manage “risk potential” and ensure “traceability, transparency and accountability” across its decentralised network.

“In the short term, maybe regulators would win but I have very strong belief in the open source community which is growing really really fast,” says Saurabh. “There are twenty-five million registered developers on [API development platform] Postman and a very big chunk of them are now building in generative AI which is opening up new businesses and new opportunities for all of them.”

By offering low-cost access to AI, Monster API says it aims to empower developers to innovate with machine learning. The platform already hosts high-profile models such as Stable Diffusion and Whisper, which users can fine-tune. But Saurabh says companies can also train their own foundation models from scratch using otherwise idle GPU time.

In future, the company hopes to expand the pool of accessible GPU power beyond crypto rigs and data centres by providing software that can bring any device with a suitable GPU or chip online. This could include any device with an Apple M1 or later chip.

“Internally we have experimented with running Stable Diffusion on [a] MacBook here, and not the latest one,” says Saurabh. “It delivers at least ten images per minute throughput. So that’s actually a part of our product roadmap, where we want to onboard millions of MacBooks on the network.” He says the goal is that, while its owner sleeps, a MacBook could be earning them money by running Stable Diffusion, Whisper or another model for developers.

“Eventually it will be PlayStations, Xboxes, MacBooks, which are very powerful and eventually even a Tesla car, because your Tesla has a powerful GPU inside it and most of the time you are not really driving, it’s in your garage,” Saurabh adds.