Microsoft and chipmaker Qualcomm are creating new ‘on-device’ AI models on Snapdragon processors running Windows 11, potentially paving the way for local and offline access to generative AI capabilities such as image and text generation. The news comes as Microsoft confirmed it is bringing its GPT-4-powered Copilot AI into Windows 11.

Qualcomm, which made the announcement during Microsoft’s Build developer conference, says its offline AI models are designed to reduce the load on cloud providers and cut the cost of generative AI by moving some of the processing to the edge. The company says this would allow for “more affordable, reliable, and private” generation.

Also announced during Build was tighter integration of Microsoft’s Copilot AI system with its full range of products including Windows 11 and Microsoft 365. It will sit in the Windows sidebar and work in a similar way to Bing AI chat. Users will be able to type questions or ask it to complete tasks within the operating system such as changing desktop backgrounds. Bing search, and the ability to browse the web, is also coming to OpenAI’s ChatGPT, which currently runs off a fixed dataset rather than being able to search up-to-date sources.

While all of those models currently rely on access to the internet and expensive cloud services, provided through Microsoft’s Azure platform, future versions may be more local.

Qualcomm has been developing an “AI Stack” that integrates with its Snapdragon processors to allow for more scalable foundation AI models. The company says it will allow OEMs and developers to distribute AI applications without relying on cloud providers.

Qualcomm Snapdragon’s AI advantage

“For generative AI to become truly mainstream, much of the inferencing will need to be executed on edge devices,” said Ziad Asghar, senior vice president of product management at Qualcomm.

It is expected there will always be a hybrid approach of cloud and on-device processing for AI systems, but being able to add the on-device element is more cost-effective. Qualcomm says its AI Engine processes workloads more efficiently than running on a GPU or CPU, which in turn allows them to be run on small, thin and lightweight devices including phones, laptops and tablets.

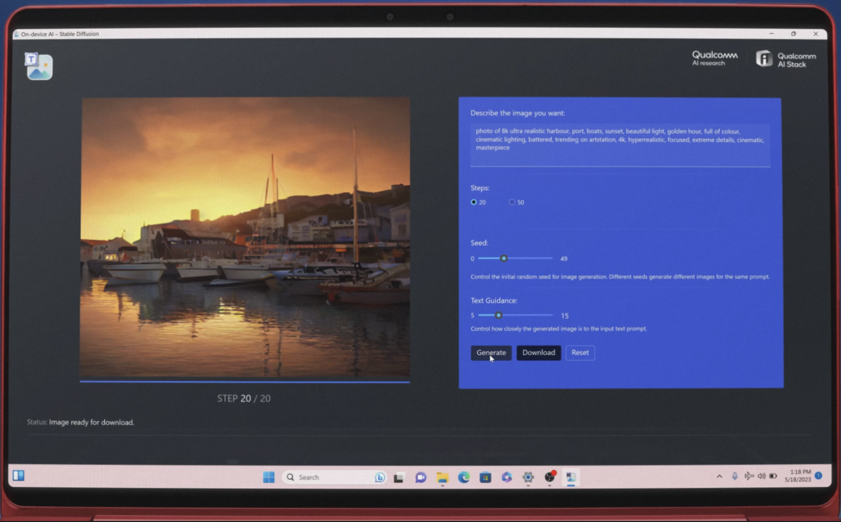

Qualcomm displayed a range of tools for developing generative AI on-device in Windows 11, including the Stable Diffusion text-to-image AI model. A version with more than a billion parameters is already running ‘on-device’, and Qualcomm says it has a path to versions of the model with 10 billion-plus parameters. The company is also working on bringing large language models to the edge.

“Both cloud and device processing are needed to extend AI across the vast universe of devices and applications,” said Pavan Davuluri, corporate VP, Windows silicon and system integration at Microsoft. “By bringing together Microsoft’s cloud AI leadership and the capabilities of the Windows platform with Qualcomm Technologies’ on-device AI expertise, we will accelerate the opportunities for generative AI experiences.”

Locally running AI models aren’t a new thing. There is a large open-source developer community working with versions of Meta’s leaked LLaMA large language model and running it on old laptops. Other groups are building text-to-speech and text-to-image models, but these tend to have significant file sizes or require a good graphics card. Qualcomm is aiming to create solutions that work more seamlessly and efficiently.

It’s “no secret” that Qualcomm and Microsoft have been working closely on silicon for some time, says Geoff Blaber, CEO at analyst company CCS Insight. “A big driver behind that is not just what Qualcomm can deliver in terms of Arm-based CPU power and efficiency but its roadmap for AI acceleration,” Blaber says. “With the announcement of Windows Copilot, it’s clear that Microsoft is embracing AI at the heart of all its tools and platforms and for that it has very specific requirements of the underlying silicon.”

Blaber adds: “Microsoft’s vision for generative AI at the heart of its tools and platforms can’t scale if it depends solely on the cloud. It would be orders of magnitude too expensive, highly inefficient and the user experience would fall short. We’re going to see a blend of AI running on-device, in the cloud and a hybrid combination of the two. On-device generative AI isn’t feasible on mass-market Windows hardware today, but it’s a clear priority and direction of travel for the Windows ecosystem. This is the real basis for Microsoft’s close cooperation with Qualcomm.”

Microsoft beefs up AI offering with Build announcements

Build is Microsoft’s developer conference, and AI was at the core of many of its new products. A new Azure AI Studio will make it easier to integrate external data sources into the OpenAI APIs available through the Azure cloud. OpenAI’s APIs are also being made more widely available to users of the platform. Developers will be able to create plugins to integrate their tools, data and services into any Microsoft product running Copilot, which includes Windows and Office applications.

The plugins are a result of the plugin standard developed by OpenAI for ChatGPT. This means any plugins built for ChatGPT will also work across all Microsoft products including Teams. “Developers can now use one platform to build plugins that work across both consumer and business surfaces, including ChatGPT, Bing, Dynamics 365 Copilot (in preview) and Microsoft 365 Copilot,” a spokesperson for Microsoft said.

The same plugins currently available for paying users of ChatGPT will be added to Bing, including OpenTable, Wolfram Alpha and Klarna. In return, Microsoft is adding its own Bing search functionality to ChatGPT. “Now, answers are grounded by search and web data and include citations so users can learn more, all directly from within chat,” said Microsoft.

An AI-powered analytics platform called Fabric for enterprise-grade data was also launched at the conference. This brings together existing tools including Power BI, Data Factory and Synapse into a single product.