It began with a confession. In March, the military junta in Myanmar released a video of one of their political prisoners, Phyo Min Thein. Former chief minister of the Yangon region, Thein was filmed sitting behind a desk while calmly explaining how he bribed Aung San Suu Kyi for political favours. “I gave her silk, food, US dollars and gold bars,” he said, all hidden inside “appropriate shopping bags.”

The video’s release coincided with a wider campaign by the junta to discredit the democratic government it had overthrown in February. That alone gave audiences reason to doubt anything Thein was saying, as did the fact that for most of the video the ex-governor appears to be reading from a script. Many other viewers, however, questioned whether the person in the video was really Thein. The voice, said some, didn’t sound like his. “Only the mouth and eyes move, not the body or the hands,” said another.

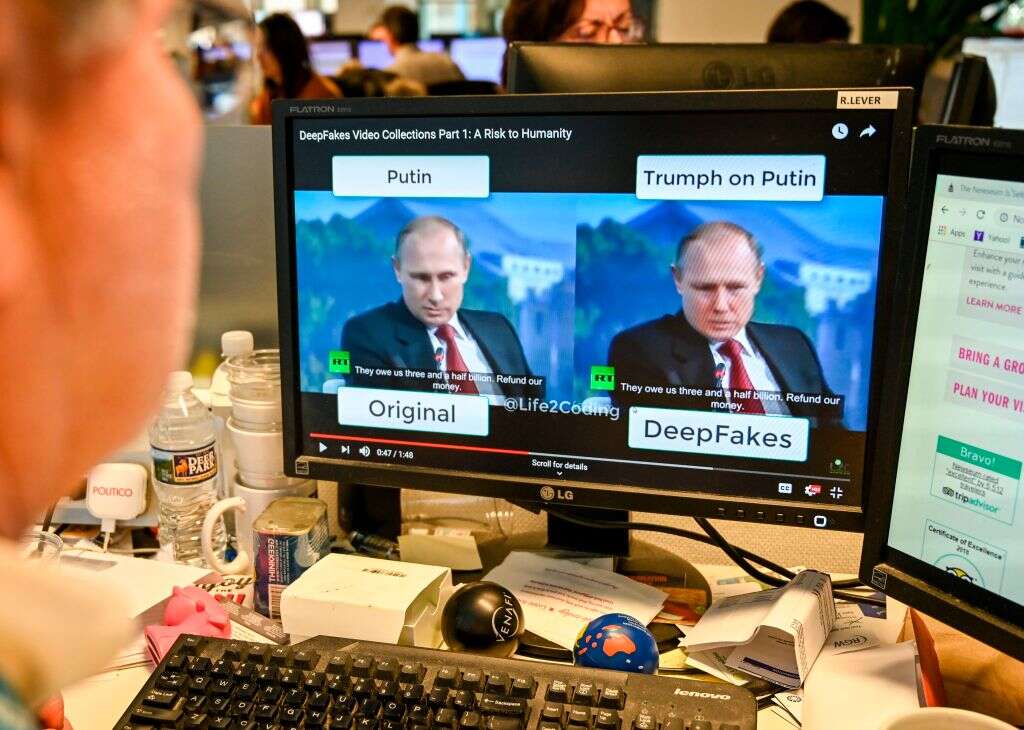

The conclusion seemed obvious, then: Thein’s confession was an elaborate deepfake. It was a scenario experts in the field of image manipulation had been warning about for years. The ability to use generative adversarial networks (GANs) to convincingly transplant one person’s face onto another’s, they said, would eventually lead to political disinformation. As the phenomenon took hold among machine learning forums in the late 2010s, organisations like Sensity, Sentinel and later Quantum Integrity emerged with a mission to raise awareness of the threat and come up with methods capable of automatically detecting deepfakes at scale.

These start-ups have succeeded in exposing the spread of malign deepfakes, used in everything from celebrity-themed pornography to blackmail, revenge porn and fraud. Until recently, these creations were fairly easy to spot. Even some of the more sophisticated models, including a popular series of deepfakes of Tom Cruise, contain obvious visual artefacts around the edges of the face. If these flaws aren’t spotted first by the human eye, they’re caught by machine learning algorithms trained to identify deepfakes by analysing a vast corpus of real and fake media.

Even the latest models, however, couldn’t agree on whether the video of Thein was your typical forced confession or something more advanced. Detection software operated by Deepware concluded with 98% certainty that the video was a deepfake, while Sensity’s database indicated that it wasn’t. Meanwhile, digital forensics experts tapped by Witness, a non-profit that supports human rights causes with video technology training, couldn’t be sure. “The case in Myanmar demonstrates the growing gap between the capabilities to make deepfakes, the opportunities to claim a real video is a deepfake, and our ability to challenge that,” wrote its programme director Sam Gregory in a recent opinion piece for Wired.

The Thein video isn’t an isolated case. In June 2020, Facebook released the results of a competition with a $500,000 prize for the most accurate deepfake detection software. The best out of some 35,000 models was only 65.18% accurate in the wild. “That’s just not robust enough to be deployed at scale, and certainly not in a critical context,” says deepfake expert Henry Ajder. Indeed, the growing visual sophistication of deepfakes has left Ajder increasingly uncertain whether current approaches to tackling them are on the right track.

“Deepfake detection has a role, but it’s an incredibly challenging technology to get right,” he says. “And I think we do need to have a little bit more nuance when thinking about other approaches that we could be engaging in to tackle the problem.”

Morality play

Deepfakes have had malign use cases since their inception. The term was first used in 2017, when a Reddit user posted videos where the faces of several Hollywood actresses had been superimposed onto pornographic performers without their consent. This application has endured, with one report by Sensity estimating that such videos constitute almost 96% of all deepfakes. Other criminal applications “are starting to appear,” explains Dr Pavel Korshunov of the Idiap Research Institute, including blackmail, sexual abuse and fraud.

These threats, combined with the technology’s much-vaunted potential as a disinformation vector, have prompted social media giants like TikTok and Facebook to prohibit deepfakes on their platforms and explore how to set up a robust and automatic detection architecture.

Most malign deepfakes are produced using one of two methods. The first relies on models similar to those found in novelty apps like Reface or Wombo, which require a minimum of input data – sometimes just a single photo – to map one face onto another, sometimes in real time. While this method lends itself to quick and nasty attacks, the videos that result often contain obvious visual artefacts. This is less true of the second method: higher-end, offline deepfake models, which need more examples to learn from and take longer to produce a convincing imitation, but are operated by individuals trained in machine learning and VFX who have the time to blend out remaining flaws frame by frame.

It is these flaws that most deepfake detectors are looking for. There is “a huge body of work that tries to detect deepfake attacks using machine learning,” explains Dr Yisroel Mirsky of Ben-Gurion University. These neural networks “use some sort of classifier to look at the image and make a prediction based off the content as to whether this image is a deepfake or real.” Some detectors concentrate on more obvious flaws, such as shading errors around the periphery of the face, like the neck and hair. As creators have grown more adept at blending these out, however, certain models have started to search for more obscure deviations, like the reflection in the eyes, strange body postures or tiny movements on the face indicating a pulse.

This is the approach used by Quantum Integrity (QI), one of a growing number of deepfake detection start-ups. “We use GAN networks to detect them,” explains CEO Anthony Sahakian. Founded in February, the company has a laser focus on cornering the fraud end of the detection market: manipulated photographic inventories and insurance claims, biometric spoofers and Zoom-bombers. “We have a very plastic detector,” says Sahakian. “It’s easily and quickly retrainable for these use cases.”

It has to be. GANs are effectively two AI models in one: a forger and a detective. The forger is tasked with producing an imitation of something so convincing that, after much trial and error, it will fool the detective. Aside from being a great way of producing deepfakes, the model can be easily tweaked. If a developer learns, for example, that a deepfake detector is looking for a specific visual artefact, then they can ‘instruct’ the GAN to compensate. This is why the code for many of the more sophisticated detection models remains private. “It’s a constant cat and mouse game,” says Sahakian.
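
The forger-and-detective contest is usually written as a two-player game. As a sketch, here is the standard objective from the research literature on GANs, which is not necessarily the variant used by any company named in this article. The forger is a generator G that turns random noise z into a fake sample, and the detective is a discriminator D that outputs the probability that a sample x is real:

```latex
\min_{G} \max_{D} \;
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
```

D is trained to push this value up by scoring real samples near 1 and forgeries near 0, while G is trained to push it down by making D(G(z)) as close to 1 as it can. Adding a penalty for a particular artefact to G's side of the objective is one way a developer could 'instruct' the forger to compensate for a known detector.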

And it seems the advantage may soon be with the attackers. In February, a team of researchers from UC San Diego illustrated how vulnerable deepfake detectors are to ‘adversarial attacks.’ By injecting patterns of interference at the pixel level, they successfully confused the top three detectors in Facebook’s Deepfake Detection Challenge. “If the detector is not aware that there could be some sort of perturbation – which, in most cases, it is not – it’s very easy to fool them,” explains Shehzeen Hussain, one of the study’s co-authors. “You can bypass a lot of these systems with these attacks.”
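
To see why pixel-level interference can be so effective, consider a minimal sketch in Python. It is not the UC San Diego team's method: the detector here is a hypothetical linear model over 64 "pixels", its weights and bias are made up, and the attacker is assumed to know them. The point is only that many tiny nudges, each in the direction the detector is sensitive to, add up to a large change in its verdict.

```python
import math

def detector_score(pixels, weights, bias):
    """Toy linear detector: estimated probability that the frame is fake."""
    z = sum(w * p for w, p in zip(weights, pixels)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def sign(value):
    """Return -1, 0 or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)

def perturb(pixels, weights, epsilon):
    """Move each pixel by at most epsilon in the direction that lowers the score.

    For a linear model, the gradient of the score with respect to a pixel has
    the same sign as that pixel's weight, so we step against sign(weight).
    Pixel values are clipped to the valid range [0, 1].
    """
    return [min(1.0, max(0.0, p - epsilon * sign(w)))
            for p, w in zip(pixels, weights)]

# Hypothetical detector and input, chosen only for illustration.
NUM_PIXELS = 64
weights = [0.5 if i % 2 == 0 else -0.5 for i in range(NUM_PIXELS)]
bias = -1.0
fake_frame = [0.6 if i % 2 == 0 else 0.4 for i in range(NUM_PIXELS)]
epsilon = 0.1  # maximum change allowed per pixel

attacked_frame = perturb(fake_frame, weights, epsilon)
score_before = detector_score(fake_frame, weights, bias)
score_after = detector_score(attacked_frame, weights, bias)

print(f"fake score before attack: {score_before:.3f}")  # above 0.5: flagged as fake
print(f"fake score after attack:  {score_after:.3f}")   # below 0.5: passes as real
```

Real detectors are deep networks rather than linear models, and attackers do not always have access to their internals, but the same principle of following the detector's own sensitivities applies.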

Reasonable doubt in detecting deepfakes

Sahakian is aware of these threats. “We’re not looking for anything that has to do with the image itself,” he says, adding – a little mysteriously – that QI’s detection model looks “into what’s been done to the image behind the pixel level” to make its decisions. But can the company explain why its detector makes those decisions? Sahakian laughs. “No, we can’t. It’s a black box.”

QI is hardly alone in this problem, with one recent study complaining that ‘most state-of-the-art deepfake detections are based on black-box models.’ This has profound implications for the use of detection software in critical contexts. The verdict of such programs could, for example, be the deciding factor behind the prosecution of an individual for sexual abuse or copyright fraud. Equally, a lawyer for the defence may end up convincing a judge that such a verdict should be thrown out, because nobody can say with certainty why the software reached it.

Another solution, however, promises to attack this problem of certitude in a much simpler, and on the face of it, more effective way. At Microsoft, a team of developers is working with publishers including the BBC, CBC, and The New York Times to create a chain of trust for all audio and visual content passing through their respective organisations. “You can think of it very simplistically as a digital signature on top of a piece of media that flows through a distribution network,” explains Microsoft engineer Paul England.

Known as ‘Project Origin,’ the scheme’s goal is to eventually allow this signature to include information about how the piece of media has been edited over time. Needless to say, this comes with significant challenges, not only around compression but also around institutional buy-in. England is confident, though, that the buy-in problem has started to be solved by bringing Origin under the auspices of the Coalition for Content Provenance and Authenticity (C2PA), a content standards body that includes not only Microsoft but also Twitter, Adobe and semiconductor giants Intel and Arm.
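
The idea of a signature that also records edit history can be sketched in a few lines of Python. This is an illustration only and not the Project Origin or C2PA format: the record fields, function names and key are all hypothetical, and an HMAC with a shared secret stands in for the public-key signatures a real provenance scheme would use. Each record stores a hash of the media at that step plus the signature of the previous record, so altering the file or any earlier record breaks the chain.

```python
import hashlib
import hmac
import json

PUBLISHER_KEY = b"publisher-demo-key"  # hypothetical secret, for illustration only

def sha256_hex(data: bytes) -> str:
    """Fingerprint of the media bytes."""
    return hashlib.sha256(data).hexdigest()

def sign_record(record: dict, key: bytes) -> str:
    """Sign a record with HMAC-SHA256 (stand-in for a public-key signature)."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()

def append_step(chain: list, media: bytes, action: str, key: bytes) -> list:
    """Return a new chain with one more signed record describing `action`."""
    record = {
        "action": action,
        "media_hash": sha256_hex(media),
        "previous_signature": chain[-1]["signature"] if chain else None,
    }
    return chain + [{**record, "signature": sign_record(record, key)}]

def verify(chain: list, media: bytes, key: bytes) -> bool:
    """Check every signature and link, then check the media matches the last record."""
    previous = None
    for entry in chain:
        record = {k: v for k, v in entry.items() if k != "signature"}
        if record["previous_signature"] != previous:
            return False
        if not hmac.compare_digest(entry["signature"], sign_record(record, key)):
            return False
        previous = entry["signature"]
    return bool(chain) and chain[-1]["media_hash"] == sha256_hex(media)

# Usage: a clip is captured, then cropped by the publisher.
original = b"raw video bytes"
cropped = b"raw video bytes, cropped"
chain = append_step([], original, "captured", PUBLISHER_KEY)
chain = append_step(chain, cropped, "cropped", PUBLISHER_KEY)

print(verify(chain, cropped, PUBLISHER_KEY))            # True
print(verify(chain, b"swapped footage", PUBLISHER_KEY))  # False
```

A scheme like this says nothing about whether the original footage was truthful. It only lets a viewer check that what they received is what the signer published, along with the edits the signer declared.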

“C2PA is trying to set up a minimal set of interoperable data structures,” says England. These would not only work for authenticating media passing through trusted publishers, but also have the potential to attach provenance information to all forms of audio and video from the moment they’re created. This, the engineer recognises, is bound to create its own problems. C2PA is working closely with Witness, for example, on how to balance the need for provenance information with the anonymity of whistleblowers, particularly those attempting to release video evidence of corruption under repressive regimes.

England is confident that the C2PA’s work can begin to build a chain of trust for visual media within the next couple of years. In the meantime, he predicts that the detection architecture for deepfakes will hold. “My sense is that, right now, deepfakes are imperfect and there is a window where tools that are trained to look for these imperfections are providing some use and some value,” says England. Even so, that window is shortening. “This is sort of like an enhanced Turing test, right? Can [developers] fool both people and machines with their deepfakes? And I think the deepfake creators are well on the way to doing that.”

Indeed, chains of trust may not arrive before deepfakes profoundly change our relationship with audio and video. The technology is growing more popular by the day. In April, novelty deepfake app Reface claimed installs had topped 100 million, while synthetic audio remakes of pop hits are threatening to become their own musical sub-genre. Developments like these give Ajder pause for thought on how any future detection architecture should set its priorities. “It’s unclear what use knowing something is synthetically manipulated going to be at scale, when so much content is manipulated in some way,” he says.

Basic enforcement of personal image rights, then, may take a back seat to new efforts to hold back an inevitable tidal wave of racist, sexist and violent imagery created using deepfakes. Then there’s the potential havoc that the technology could wreak on our basic trust in visual media. The result, says Korshunov, will probably resemble the relationship between citizens and the media in his native Russia. “Because of all the propaganda and misinformation, they don’t trust anything,” he says.

In such an environment, a lack of corroborating information means that the veracity of a piece of audio or video becomes a matter of choice, one that ends up being informed by our personal biases. The internet accelerated this trend, says Korshunov. The radical on the fringe can easily find a friend. “Now he’s self-validated,” argues Korshunov. “He’s not crazy, actually – everyone else is crazy.” And soon he’ll have the footage to prove it.