Ten European software industry associations have called on the EU to scrap plans to bring general-purpose AI, including natural language processing and chatbots, within the scope of its new AI Act. They describe the move as a “fundamental departure from its original objective” and say it could stifle innovation and hit the open source community.

The European Union's AI Act aims to establish a framework for regulating the use of artificial intelligence, taking a “risk-based” approach and setting a worldwide standard. Its core provisions, which focus on specific-purpose, narrow AI, include tighter regulation in high-risk areas such as healthcare, along with transparency requirements.

However, the group of industry associations, led by BSA, The Software Alliance, has published a joint statement urging EU institutions to reject recent additions to the Act that would bring general-purpose AI within its scope, and instead to “maintain a risk-based approach”.

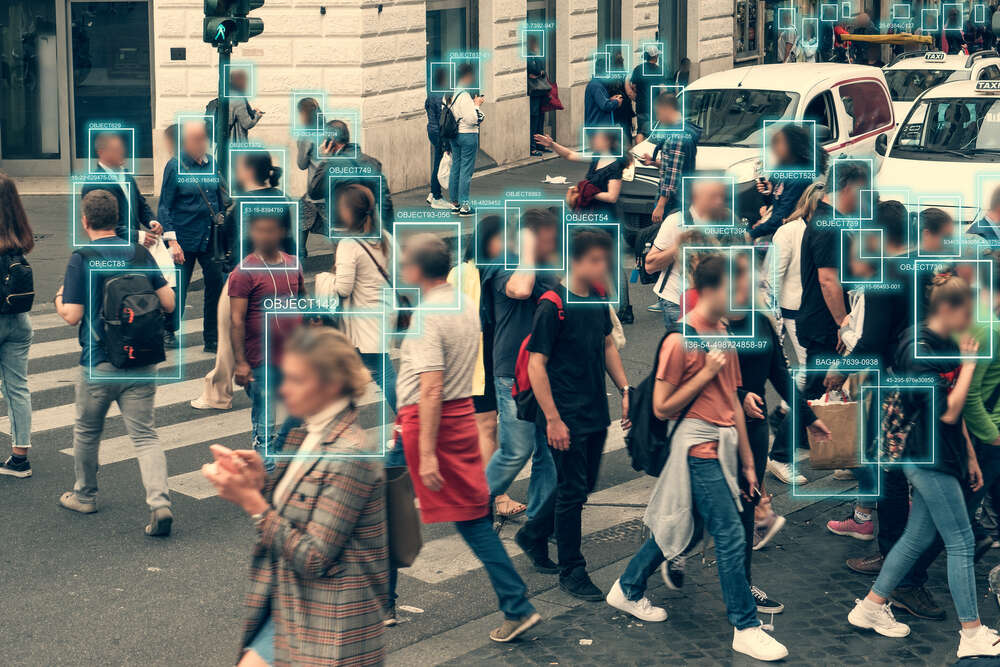

General-purpose AI is designed to perform a broad range of tasks rather than a single, predefined one. It has no set path or pattern to follow, and it includes chatbots that use natural language processing as well as driverless vehicle systems.

Fundamental departure from objective

In May, the French presidency of the Council of the EU announced plans to bring general-purpose AI, which had previously been excluded, within the scope of the Act. Providers would be required to comply with the Act's articles on risk management, data governance, transparency and security.

“This inclusion would be a fundamental departure from the original structure of the AI Act, by including non-high-risk AI in the scope of the regulation, against all objectives set out by the European Commission,” the group behind the statement wrote.

The ten associations from across the EU call on policymakers to ensure that the AI Act maintains a risk-based approach to regulation and excludes general-purpose AI.

“The businesses we represent have been supportive of the objectives of the AI Act, in particular the goal to create a balanced and proportionate regulatory approach,” the statement says.

The associations claim that including general-purpose AI would overturn this risk-based approach and “severely impact open-source development in Europe”, as well as “undermine AI uptake, innovation, and digital transformation”.

“For these reasons, we strongly urge EU institutions to reject the recent proposals on general purpose AI and ensure that the scope of the AI Act maintains its risk-based approach and a balanced allocation of responsibilities for the AI value chain, for a framework that protects fundamental rights, while addressing the challenges posed by high-risk AI use-cases and supports innovation.”

The group says that including general-purpose AI would subject low-risk AI systems to heavy scrutiny simply because there is no clear definition of how they will be used.

“As a result, the AI Act would no longer regulate specific high-risk scenarios, but a whole technology regardless of its risk classification,” the group wrote in a paper on the issue.

The paper cites the example of a developer that has designed a general-purpose AI system to read documents, ranging from university transcripts to government forms, and extract relevant information for a specific use. Because the system is designed to be customised further, the developer would have to carry out a pre-emptive risk assessment covering a wide range of sectors and future use cases.

That same developer would also have to monitor the tool's operation continuously, which could create conflicts with GDPR, since the tool could also be used to extract personal data.

Unforeseen outcomes of changes

The changes to the Act would “run counter to the commission’s own assessment—and that of stakeholders who provided comments on the AI Act—on which option for legislation would better serve the EU for supporting the development of trustworthy AI,” the statement continues.

What’s more, according to the group, the definition of AI in the amended legislation covers some software products not traditionally considered AI, such as APIs and tools used to develop AI systems.

“One thing the AI Act would benefit from is more clarity on the allocation of responsibilities between AI developers and deployers, ensuring that compliance obligations are assigned to the entities best placed to mitigate challenges and concerns and encouraging coordination along the AI value chain,” Matteo Quattrocchi, BSA policy director, told Tech Monitor.

However, not everyone agrees with the group’s assessment. Dragonfly's Adam Leon Smith, a UK industry representative to the EU AI standards group, said general-purpose systems need to be included in the legislation because of their potential for unintended consequences.

“Such components can be the cause of the harm the AI Act is intended to prevent,” Leon Smith said. “In fact, the risk is arguably greater if the AI component is being used in a way that the developers did not envisage.

“The latest draft proposals include a get-out clause for developers: they simply need to include a usage limitation in the instructions for use. Frankly, it would be unusual to download open source software without legal documentation already being included – so this is an extra line or two in there – not a huge burden upon developers.”