It was shaping up to be another dry hearing at the Senate Judiciary Committee for Joe Biden. It was March 1986, and the senator from Delaware was about ready to finish questioning Treasury Department official David D Queen about a backlog of data used to detect money laundering. “Are there any red lights that go on in the computer bank?” asked Biden.

“It is not like the Maytag repairman sort of sitting there, lonely, wondering when the next call will come in,” Queen replied, sardonically. “We are in the process of spending no small sum of effort and money in the area of artificial intelligence, with the very legitimate belief that… ”

“Artificial intelligence?” Biden interrupted.

AI, replied Queen, “is the ability of a computer program to actually, perpetually, analyse the data and match things that would not, to the ordinary poor soul, click as a significant pattern.”

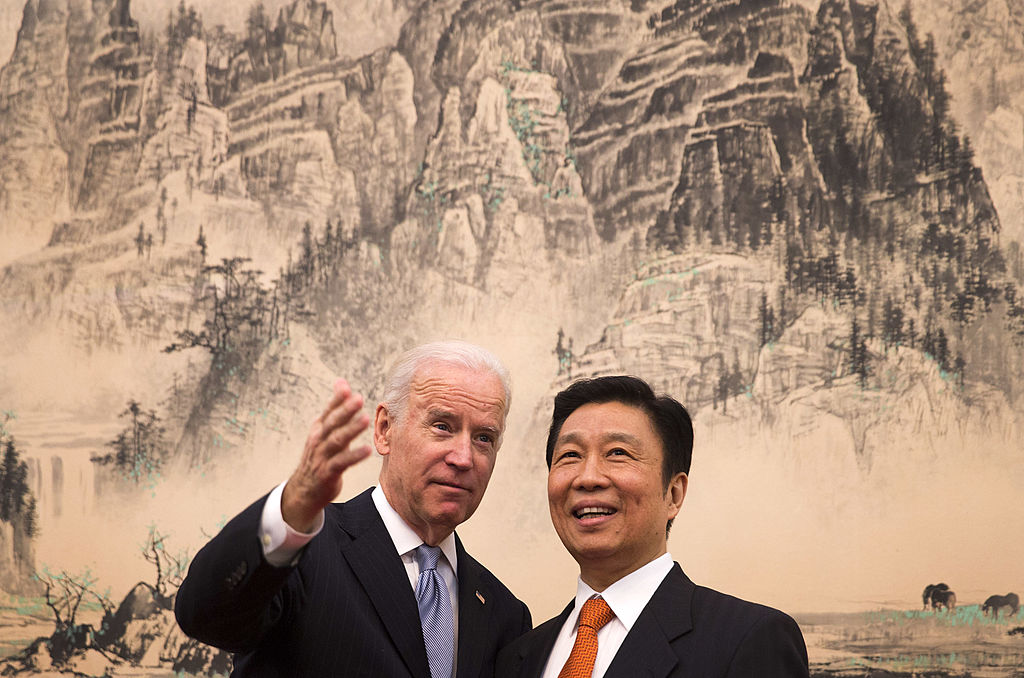

This episode was Joe Biden’s first public introduction to the rudiments of AI. Much has changed since 1986. Biden was elected vice-president, then president. AI, meanwhile, fell out of favour in technology circles, only to return with a vengeance in the past decade.

Today, it is woven into the daily lives of American citizens, provoking new anxieties about how obscure algorithms govern everything from access to healthcare and performance in job interviews to law enforcement. And, as an era-defining technology, AI is a crucial battlefront in the competition with China for global technological supremacy. As a result, Joe Biden’s approach to AI over the next four years is highly anticipated.

Trump’s light touch

Robert D Atkinson, president of the Information Technology and Innovation Foundation (ITIF), attributes the Trump administration’s stance on AI to White House chief technology officer Michael Kratsios. “At least in AI, they were a little more forthcoming, or interventionist, if you will,” says Atkinson.

Trump’s otherwise laissez-faire approach to AI helped to preserve the US lead in AI innovation over China and the EU, Atkinson argues. Some Trump administration officials pushed for public investment in AI research, but the White House’s promise to double investment in civilian AI research to $2bn a year was criticised as being insufficient and came at the cost of major budget cuts to almost every other area of science.

The incoming Biden administration, by contrast, has promised to boost investment in federal R&D programmes to $142bn – of which AI will undoubtedly prove to be a crucial component. This is in addition to legislative efforts to increase R&D investment. “There’s a bill in the Congress called the ‘Endless Frontier Act’, which [Senator Chuck] Schumer was the lead on,” says Atkinson. Although it failed to receive a vote in the previous Congress, it is more likely to pass in the newly Democrat-controlled Senate. “It’s an important bill, because it’s $100bn in R&D in around ten areas, including AI.”

Algorithmic oversight

The area in which Biden will diverge most from his predecessor, Atkinson believes, will be the regulation of AI. “I think you’re going to see the Biden administration being more willing and more anxious to impose more stringent regulations on AI, particularly around purported bias, whether it’s gender, or race, or other issues,” he says.

The dangers of algorithmic discrimination became widely publicised during the Trump presidency, following high-profile incidents that included bias against African-Americans in healthcare algorithms and evidence that facial recognition software – which has seen an uptick in adoption by US law enforcement agencies – is systemically racist and liable to result in wrongful convictions.

Vice-President-elect Kamala Harris directly addressed algorithmic discrimination in her presidential campaign, and the cause has been championed by the newly announced appointee to the White House’s Office of Science and Technology Policy (OSTP), Alondra Nelson. A sociology professor at the Institute for Advanced Study in Princeton, Nelson has been outspoken on the detrimental impact AI can have on the lives of minorities. She has suggested that more proactive regulation may prove necessary in addressing racial bias in these software platforms.

“When we provide inputs to the algorithm… we are making human choices,” Nelson said during the press conference announcing her appointment. “That’s why, in my career, I’ve always sought to understand the perspective of people and communities who are not usually in the room when the inputs are made, but who live with the outputs nonetheless. As a black woman researcher, I am keenly aware of those who are missing from these rooms.”

According to Alex Engler, a Rubinstein Fellow in artificial intelligence and governance at the Brookings Institution, the federal government needs to take a stand against algorithmic bias. Nelson’s appointment is just the first step. “If you have sensible regulations that punish lazy and bad actors, people who are building half-assed AI systems that maybe do something that sounds fancy but are not robust or discriminatory, or have all sorts of unintended consequences, you can do that right now and get away with it,” he says.

Atkinson agrees that algorithmic discrimination should be illegal but questions its ubiquity. “There’s no way the federal government should be allowing the deployment of racially or gender-biased AI facial recognition systems,” he says. “But to say that that’s inherent is just simply wrong.”

Failure to acknowledge that non-discriminatory AI systems are not only possible but exist, he argues, could lead to regulatory overreach and a commensurate chilling of AI innovation in the US. “I think there’s a risk of having to do some sort of algorithmic approval systems, the way the European Commission is going,” he says, although he adds that this is unlikely without formal legislation.

Engler, for his part, believes that a more proactive approach to AI oversight is necessary. He not only recommends revising government guidance for facial recognition systems but also argues that federal agencies should be given the power to subpoena corporate datasets. The administration should also ban ‘facial affect analysis’ software, Engler argues, a technology that is increasingly being used in job interviews – and one that has been criticised for its potential to entrench existing inequalities in the job market.

Regulation in this vein, he believes, would spur innovation, not smother it. “If it’s harder to make a whole bunch of money as a snake oil company, there’ll be fewer of them,” says Engler. “The more responsible companies will pick up that market share, and everybody wins.”

China and Biden’s AI policy

While the extent to which the Biden administration can and will tighten AI oversight in the US remains unclear, it is certain to be the subject of active public discussion in the years to come – not least because the vibrancy of America’s innovation in AI has come to symbolise its standing in the world.

The growing rivalry between Beijing and Washington will be another major determinant of the rules governing the use of AI in the coming years, says Atkinson. “The Biden administration will be seeing AI as a strategic, critical technology that we have to be able to ‘win’.”

This, and the domestic pressure to contain the risks of ungoverned AI use, will be the two agendas that shape AI policy in the Biden era.