Two teams of AI agents tasked with playing a game (or a million) of hide and seek in a virtual environment developed complex strategies and counterstrategies, and exploited holes in their environment that its creators didn’t even know existed.

The game was part of an experiment by OpenAI designed to test the AI skills that emerge from multi-agent competition and standard reinforcement learning algorithms at scale. OpenAI described the outcome in a striking paper published this week.

The organisation, now heavily backed by Microsoft, described the results as further proof that “skills, far more complex than the seed game dynamics and environment, can emerge” from this kind of training.

Some of its findings are neatly captured in the video below.

In a blog post, *Emergent Tool Use from Multi-agent Interaction*, OpenAI noted: “These results inspire confidence that in a more open-ended and diverse environment, multi-agent dynamics could lead to extremely complex and human-relevant behavior.”

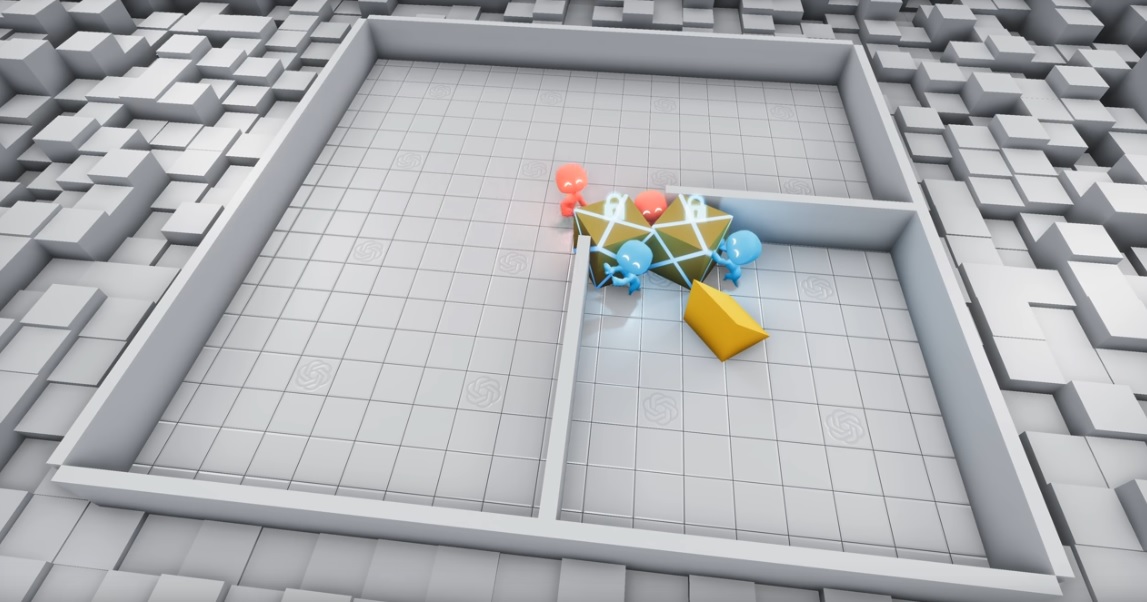

The AI hide-and-seek experiment, which pitted a team of hiders against a team of seekers, made use of two core techniques in AI: multi-agent learning, in which multiple agents learn in competition or cooperation with one another, and reinforcement learning, a form of machine learning that trains algorithms through rewards and penalties.

In the game of AI hide and seek, the two opposing teams of AI agents developed a range of complex hiding and seeking strategies (compellingly illustrated in a series of videos by OpenAI) that involved collaboration, tool use, and some creative pushing at the boundaries of the virtual world its creators thought they’d set.

> “And seekers learn that if they run at a wall with a ramp at the right angle, they can launch themselves upward.” OpenAI (@OpenAI) on Twitter, September 17, 2019

“Another method to learn skills in an unsupervised manner is intrinsic motivation, which incentivizes agents to explore with various metrics such as model error or state counts,” OpenAI’s researchers wrote.

“We ran count-based exploration in our environment, in which agents keep an explicit count of states they’ve visited and are incentivized to go to infrequently visited states,” they added. The researchers also detailed a series of unexpected behaviours, including hiders removing tools their opponents relied on from the game space entirely, and seekers launching themselves into the air for a bird’s-eye view of their hiding opponents.
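To make the idea of count-based exploration concrete, here is a minimal sketch in Python. It is not OpenAI’s implementation: the class name `CountBasedBonus`, the scaling factor `beta`, and the bonus formula `beta / sqrt(count)` (a common choice in the literature) are all illustrative assumptions, and states are assumed to be hashable values such as discretized grid positions. The agent keeps an explicit visit count per state, and rarely visited states earn a larger intrinsic reward, which nudges it toward unfamiliar parts of the environment.

```python
import math
from collections import defaultdict


class CountBasedBonus:
    """Hypothetical helper: tracks state visit counts and returns an intrinsic reward.

    Assumes states are hashable (e.g. discretized positions) and uses the
    common beta / sqrt(count) bonus; this is an illustrative choice only.
    """

    def __init__(self, beta: float = 1.0):
        self.beta = beta
        self.counts = defaultdict(int)

    def reward(self, state) -> float:
        # Record a visit, then pay a bonus that shrinks as the state becomes familiar.
        self.counts[state] += 1
        return self.beta / math.sqrt(self.counts[state])

    def peek(self, state) -> float:
        # Bonus the state would earn if visited next, without recording a visit.
        return self.beta / math.sqrt(self.counts.get(state, 0) + 1)


if __name__ == "__main__":
    bonus = CountBasedBonus(beta=1.0)
    print(bonus.reward((0, 0)))  # 1.0 on the first visit
    print(bonus.reward((0, 0)))  # roughly 0.707 on the second visit
    print(bonus.reward((3, 4)))  # 1.0: a brand-new state earns the full bonus
    # A greedy explorer prefers the less-visited neighbour.
    print(max([(0, 0), (1, 0)], key=bonus.peek))  # (1, 0)
```

In a full reinforcement learning setup, this bonus would typically be added to the game’s own reward, so the agent is paid both for winning and for visiting states it has rarely seen.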

As they concluded: “Building environments is not easy and it is quite often the case that agents find a way to exploit the environment you build… in an unintended way”.