Changes need to be made to the UK’s copyright legislation to take into account the impact of generative AI content and to protect the creative industries, representatives from UK music and theatre told MPs today.

The laws surrounding artificial intelligence are still in development, and regulators are struggling to keep pace with the rapid expansion and advancement of foundation, large language and generative AI models like ChatGPT or Midjourney. To better understand the impact these systems will have on businesses, parliament’s Science, Innovation and Technology select committee has been holding hearings on the subject.

At an evidence session earlier today, Paul Fleming, general secretary of actors’ union Equity, warned that AI was already taking jobs from creatives, particularly voice artists. He told members of the committee he does not believe this to be a “doomsday scenario”, but said contracts, legislation and frameworks need to be in place so that those whose image, voice or performance is used to train AI aren’t taken advantage of by technology or production companies.

He gave the example of windscreen adverts on radio stations. This work was once a stable part of a voice actor’s income, allowing them to take on plays or more creative performances without worrying about money. It is now going to AI, with synthetic rather than human voices reading the script. “[The change] is disproportionately affecting those with disabilities as it presents an easy access way into the industry for disabled artists,” Fleming said.

Politicians were told the challenge is to find a balanced approach: one that ensures artists are fairly compensated and have control over their work, without stifling innovation or making it impossible to create new technologies in the country.

Copyright law in the UK is a “principle that gives rights to creatives and rights holders to allow them to be remunerated for their work,” said Dr Hayleigh Bosher, senior lecturer in intellectual property law at Brunel University. Speaking to the politicians, she added: “AI companies will also benefit from updates to the patents and copyright law.”

The proposed updates to copyright law would include adding sections to give creatives rights over their own image and voice. They would also see ratification of the Beijing Treaty, which includes moral rights over a performance. This is increasingly important in the field of motion capture and gaming, where a specific movement might be highly identifiable.

Avoiding malicious use of AI

“I am a luddite in the true sense of the word,” said Fleming. “Our members don’t hate new technology but what they dislike is the malicious use of that technology in order to undermine their terms and conditions. Over 60% of members say AI will have an impact on their work and that rises to 90% for audio artists.”

“The copyright and patents act is older than me and needs serious updating,” he added, explaining that a new framework for AI usage, updates to copyright law and ratification of the Beijing Treaty would “provide leverage in collective bargaining” with both major studios and game development companies, which aren’t used to working in a unionised environment.

Jamie Njoku-Goodwin, CEO of trade body UK Music, said there is concern from all sides of the music sector over “what happens if we get it wrong”. Asked whether this stems from the way the industry had to deal with major changes from the likes of Napster and downloading in the early 2000s, he said: “There are similar challenges as we had with the internet.” It is a transformational moment and “with the wrong regulatory framework it could be very bad,” Njoku-Goodwin said.

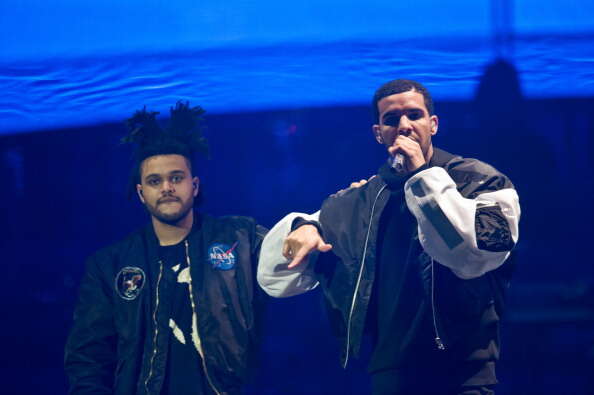

Last month it was reported that AI had been used to simulate the voices of artists Drake and The Weeknd on a track which was subsequently pulled from streaming services.

Njoku-Goodwin said: “I have no issues with AI producers generating work where creators gave permission. If as a performer I’m asked and I say yes and grant a licence with commercial agreement – then fine, we’re not against that.” It all comes down to what goes into the model and, according to Njoku-Goodwin, that is where it should be regulated.

As well as supporting calls for changes to copyright legislation, he said any AI regulation should require model developers to keep a database of all content used to train the model. This should be properly labelled and accessible in case of a copyright claim.

He says it could even make copyright disputes easier. “Right now lawyers argue whether someone heard a song first and whether the intent was to copy it,” the UK Music chief said. “This can be a very subjective process. With AI it can be much easier, as AI have to be trained on content. We need a database of what AI is trained on so we can see whether it was used in a copyright claim.”

“If it is not regulating properly at input then it creates a whole load of issues at the output stage, particularly over legality of content,” said Njoku-Goodwin. The aim, he said, is to work with the AI sector to create licensing solutions that respect copyright and the rights of artists.

MPs were told that it’s important the principles of intellectual property are embedded into artificial intelligence tools from the start. This would put the say over how content is used purely in the hands of those who created it.