The case of Nijeer Parks symbolises all that is wrong with artificial intelligence in America. Parks, a 31-year-old black man, was arrested in February 2019 by New Jersey police on suspicion of shoplifting at a hotel and then attempting to hit an officer with his car. He became the primary suspect after being identified by specialist facial recognition software. The software, however, could not explain why Parks was 30 miles away from the hotel when the incident took place.

As the New York Times later explained, Parks was the third black man to be arrested after being incorrectly identified by facial recognition software. For many AI research scientists, it was a classic case of algorithmic discrimination – a phenomenon that could only be prevented through thoughtful regulatory changes from the federal government. “It is imperative that lawmakers act to protect fundamental rights and liberties and ensure that these powerful technologies do not exacerbate inequality,” said Meredith Whittaker, co-founder of the AI Now Institute at New York University, in testimony to Congress last year.


The only way to do that, Whittaker continued, was to institute stringent limits on technologies such as facial recognition. Others, meanwhile, argued for a fundamental rethink on how the data for such applications is collected, with a newfound focus on reducing harmful racial and societal biases. Few of these critics dared hope that the federal government would act on these concerns, given the Trump administration’s laissez-faire attitude to AI harms. The election of Democrat Joe Biden to the presidency last year, however, changed that.

“There is a clear and demonstrated change in the tone and approach of the administration towards AI harms” compared to its Republican predecessor, says Alex Engler, a research fellow at the Brookings Institution. Indeed, the Biden administration has been explicitly supportive of using the power of the federal government to mitigate algorithmic discrimination and other AI-induced harms. What’s more, it has appointed some of the most vocal proponents of this approach to the White House Office of Science & Technology Policy (OSTP), including professors Alondra Nelson, Rashida Richardson and Suresh Venkatasubramanian.

“These aren’t people with under-the-radar views,” explains Engler. “These are progressive, activist people looking for a more pronounced role of government in curtailing some of the worst harms” propagated by AI systems. It’s a cohort that’s receptive to new ideas, says Engler, who recently attended a consultation organised by the OSTP on the use of biometrics and AI by the federal government. “You got a clear sense that they were listening,” he says.

Soon, they’ll be taking action. If 2021 has seen the Biden administration in listening mode on AI, next year is likely to see a push for regulatory reforms and new programs intended to fundamentally change the interactions between ordinary Americans and the algorithms that increasingly govern so much of their lives.

Time, however, may be running out. Equipped with only wafer-thin majorities in the House and Senate, the Biden administration faces the prospect of losing those altogether in next year’s midterm elections. Absent the ability to pass legislation, all that is left is for the White House to lead by example on AI – and hope this convinces the public to embrace its policy agenda in the field.

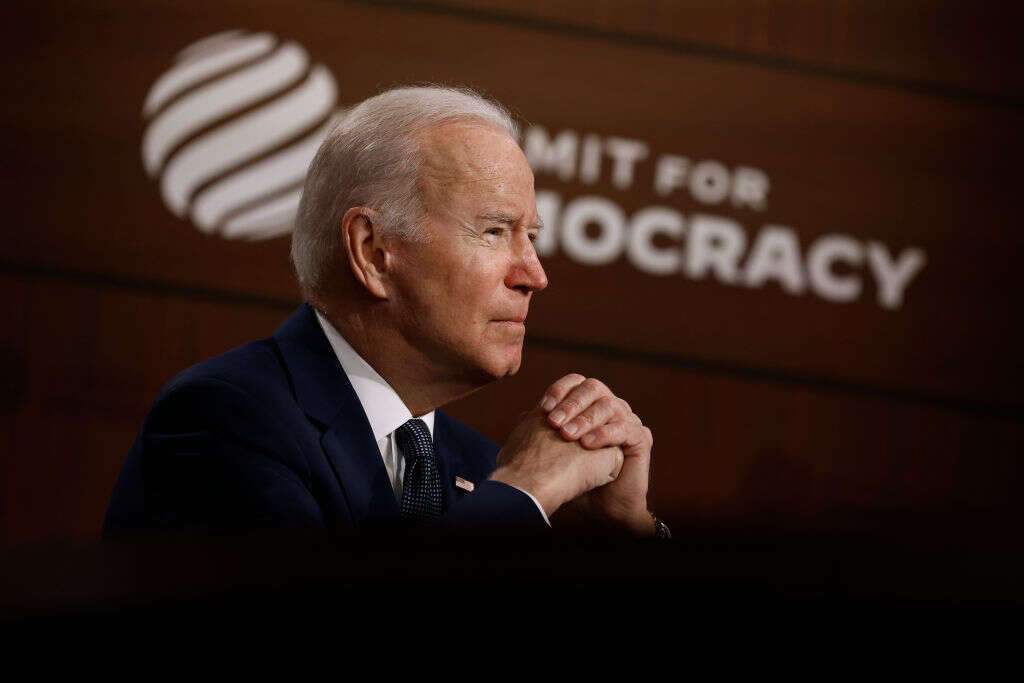

The Biden administration may need to pursue non-legislative approaches to containing the dangers of AI. (Photo by Chip Somodevilla/Getty Images)

Promotion and regulation

Specific measures from the Biden administration to address algorithmic discrimination have so far been thin on the ground. One major initiative to emerge in the past year has been what the OSTP has tentatively named the ‘AI Bill of Rights’. This new set of rules would lay down principles for algorithmic applications designed to prevent instances of outright discrimination.

‘What exactly those are will require discussion,’ wrote Alondra Nelson and White House science advisor, Eric Lander, in an op-ed for Wired, before mooting several possibilities. These include the right for US citizens to know ‘when and how AI is influencing a decision that affects [their] civil rights and liberties,’ as well as ensuring that individuals are not subjected to applications that aren’t rigorously tested or inflict ‘pervasive or discriminatory surveillance and monitoring in your home, community and workplace,’ and creating avenues for recourse when they do.

Calls for a national ‘AI Bill of Rights’ coincide with moves to mitigate algorithmic discrimination at the state level, and some have questioned whether such laws need to be passed by the federal government. ‘We already have laws to address exactly the kinds of flaws that Lander and Nelson find with AI,’ one critic recently wrote in the National Law Review.

Whether such laws could prevent cases like that of Nijeer Parks is the subject of debate. An alternative approach is to address who has access to AI development resources, such as compute infrastructure and training data, so that the resulting algorithms are more reflective of society at large. That’s the idea behind the National AI Research Resource (NAIRR), the result of a bipartisan bill passed in the final year of the Trump administration. For the past few months, a task force appointed by President Biden has been busy discussing the precise parameters of the NAIRR, as well as who will have the right to access its outputs.

“At the heart of this – and I think one of the reasons I was invited to the panel – is the problem of a lack of computational power for academics,” explains Dr Oren Etzioni, CEO of the Allen Institute for Artificial Intelligence and a member of the NAIRR task force. Most of those computing resources are under the control of the Big Tech companies, a pattern which some have criticised as leading to a lack of public accountability in the way that AI algorithms are written and implemented. Instead, NAIRR aims to create a new resource that promotes ‘fair, trustworthy, and responsible AI’ without compromising the privacy or civil rights of US citizens.

At its latest meeting, the task force also discussed how to ‘facilitate impact assessments and promote accountability mechanisms for emerging AI systems.’ Some, however, fear that the resource will end up rewarding those Big Tech firms that promoted opacity and inequity in AI systems in the first place. In November, the AI Now Institute and the Data & Society Research Institute jointly warned the task force against collaborating with Amazon and Microsoft to access their cloud computing resources. “What we’re looking at,” said Whittaker, “is a large subsidy going directly to Big Tech in a way that will extend and entrench its power,” just as its role in propagating AI harms is being questioned by Congress.

Etzioni agrees that the NAIRR shouldn’t inadvertently contribute toward Big Tech’s consolidation of AI research, much less reward bad actors. However, while it might give him pause if delivering the resource meant enriching “the makers of AK-47s or land mines or big tobacco,” he doesn’t see a moral equivalence between those industries and the likes of AWS or Microsoft. Ruling out such an alliance would also drastically reduce the opportunities to scale up AI research in the way the task force envisions, says Engler. Cutting-edge AI research involves “cloud machines that are using their own specialised versions of semiconductors specifically for the tensor functions of deep learning,” he says. As such, “I’m not sure that hypocrisy is a good enough reason not to” call on Big Tech for help.

Tackling AI harms with ‘soft law’

The question remains, however, whether the Biden administration will have the means and time to implement the NAIRR. While the task force is scheduled to deliver its recommendations to the president and Congress early next year, additional legislation will be required to implement them, and it is unclear whether such a bill would pass with bipartisan support. Etzioni is quietly confident. “I think there’s a very high likelihood of that,” he says.

If not, there are other measures that the Biden administration can take besides legislation. Agencies of the federal government enjoy significant latitude in setting rules and regulations within their departmental purview, says Adam Thierer of the Mercatus Center. In most cases, their focus is fairly narrow: the Department of Transportation, for example, has concerned itself mainly with setting the guardrails for driverless cars and commercial drones. This is not the case for the Federal Trade Commission (FTC), however, which has wide authority to set rules on consumer protections. And new chairperson Lina Khan has been outspoken in the past on the need for greater regulatory scrutiny of Big Tech.


“The Federal Trade Commission could become the first point of contact with AI regulation in the United States,” says Thierer, citing the agency’s statement of concern about biased AI applications in April as well as its recent guidance on AI-assisted health and cybersecurity applications. As such, Thierer can imagine a scenario where the Biden administration delegates a significant portion of rulemaking on commercial AI applications to the FTC, “as opposed to having a big single overarching bill.”

In that respect, the Biden administration’s reforms on AI may not be as sweeping as initially assumed. “Scholars refer to it as ‘soft law,’” explains Thierer, an approach to AI governance that’s much more iterative and focused on setting guidelines, organising consultation sessions and making pronouncements of concern than setting hard and fast rules. This has already been happening for driverless cars, says Thierer.

“We don’t have a federal law and a federal regulatory approach” for autonomous vehicles, he explains. “But what we do have is multiple sets of agency guidance coming from the US Department of Transportation that outline a series of best practices for developers to follow when considering new autonomous vehicles.”

This ‘soft law’ approach would be a practical way for the Biden administration to pursue its goals on AI, especially as its attention is increasingly absorbed by other issues, including the economic recovery from the pandemic and international affairs. Not that these areas are mutually exclusive, as far as the White House is concerned. After all, greater investment in basic and applied AI research has been cited by the administration as fuel for a US economy recovering from the ravages of Covid-19 and another way to compete effectively with China.

A ‘soft law’ approach would be vulnerable to reversal, however. When Republicans assumed control of the executive branch in 2017, a whole host of regulations passed by the previous administration were rolled back across the federal government in the name of loosening constraints on business. One can easily see the same happening after the next Democratic presidential defeat. In that case, to preserve its legacy on AI, the Biden administration will need to use policymaking powers to significantly shift public attitudes toward reform.