Do you think that the police should be allowed to use facial recognition technology? It’s a question I’m asked almost every day as the UK’s biometrics and surveillance camera commissioner.

Consider the case of Frank R. James. In April, James set off smoke bombs on a crowded subway carriage in Brooklyn, New York, before shooting ten commuters and disappearing in the ensuing chaos. Police soon ascertained his identity, however, by finding the key to his rental van close to the crime scene, along with a 9mm semi-automatic handgun, fireworks, undetonated smoke grenades and a hatchet. The subsequent manhunt for James involved hundreds of officers from multiple federal and state law enforcement agencies. It lasted 29 hours.

Freeze frame at one hour after the incident and, in many ways, you have an exemplary justification for the use of live facial recognition in law enforcement: a terrorist attack in a densely populated city, taking place on a transport system equipped with extensive surveillance camera systems, an identified suspect and available images of what he looks like. He’s armed, he’s fired 33 rounds into a crowded carriage and detonated multiple devices – and he’s missing.

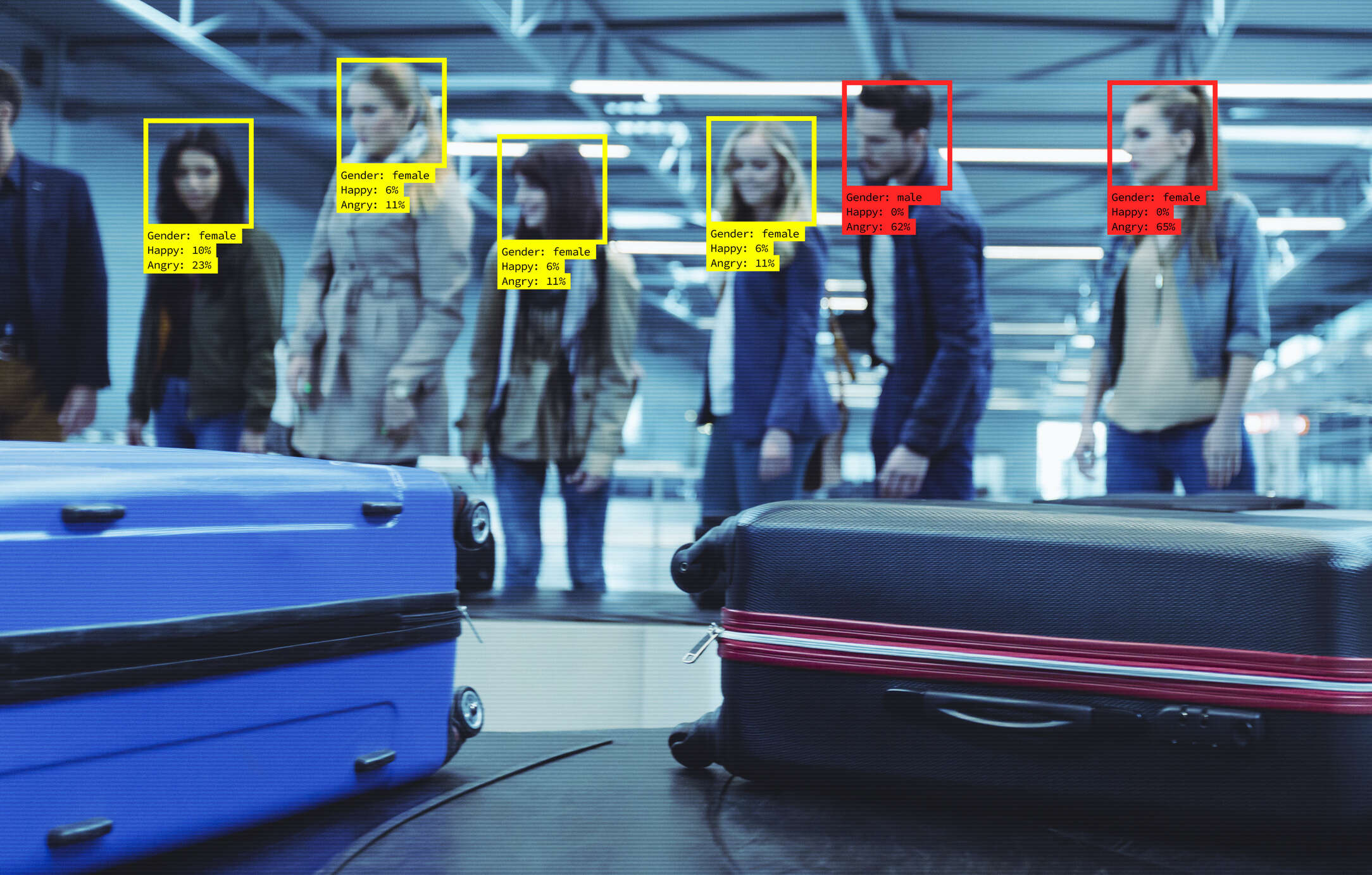

If, at this moment, the police had the technical capability to feed James’ image into the combined surveillance systems in the area and ‘instruct’ the cameras to look for their suspect, on what basis could they responsibly refuse to do so? That’s the ‘if’ that we have to recognise now, as the capabilities of live facial recognition continue to mature. However, the parameters of where, when, how and by whom the technology can be used in less extreme cases remain undefined.

In the case of James, it doesn’t appear that such a level of surveillance capability was available. Instead, law enforcement agencies named the suspect and released his picture, urging the public to keep sending them footage from the crime scene and elsewhere as they pieced together his movements. This response and the reliance on the citizen’s technology – and their willingness to share it – are also a critical feature of how police surveillance of public space has shifted. Here’s why.

The surveillance relationship

Public space surveillance by the police in England and Wales is governed largely by the Surveillance Camera Code of Practice. But the practice has moved on from the world initially envisaged by the Code’s drafters, in which the police needed images of the citizen, to one in which they also need images from the citizen. Following any incident, many police forces now regularly make public requests for images that might have been captured on personal devices.

Not all of this interaction between the public and the police is benign, or predictable. Often, the citizen is also capturing images of the police themselves: the faces of many law enforcement personnel who visited the Brooklyn crime scene, for example, were broadcast worldwide on global news channels. As people now have access to surveillance tools that only a decade ago were restricted to state agencies, the risks of facial recognition technology being used to frustrate vital aspects of our criminal justice system such as witness protection, victim relocation and covert operations are obvious – an aspect that has received comparatively little attention in the many debates on the subject.

Some might say that, if a city were to synthesise its overall surveillance capability across its transport network, street cameras, traffic and dash cams, body-worn devices and employees’ smartphones, this would amount to the same thing as asking citizens to send in their images – albeit in a far more efficient, effective and less randomly intrusive way. Arguably it would, but in order to arrive at the freeze-framed moment above, a city would first need to develop a fully integrated public space surveillance system equipped with facial recognition technology, sound and voice analytics, vehicle licence plate readers and a host of other features invisible to the naked eye.

Once installed, the capabilities of such an integrated surveillance system would stretch far beyond detecting suspected terrorists as they flee from the scene of an attack. It would, for example, be spectacularly efficient at ticketing barriers, only letting through those passengers known to have bought a ticket or travel card. It would be unsurpassable in its ability to find people wanted for other crimes – from sex offenders and speeding motorists, to illegal immigrants or former prisoners who have breached their licence conditions. But would the use of intrusive surveillance be justified in suppressing all of these types of criminal activity? If not, which ones, and who would decide?

Possible, permissible, acceptable

Even if such an integrated surveillance system remained unbuilt, similar questions need to be asked about the use of facial recognition algorithms by the police and other public institutions. Not only must the technological capabilities of such systems be scrutinised, but also the legal justification and societal expectations behind their use.

Valid questions persist, for instance, about the accuracy of facial recognition algorithms in identifying faces in a crowd, especially those belonging to non-white individuals. One must also consider the scale at which such a system is intended to operate: how many millions of faces, for example, is it proportionate to scan in order to train it to find a person who failed to appear at court for being drunk and disorderly?

Then, of course, there’s the issue of education. A basic public understanding of the mechanics behind the technology is essential if law enforcement agencies wish to obtain the backing of society for its widespread deployment. However, it remains unclear how many people actually understand that facial recognition algorithms require ‘training’ from thousands, perhaps millions, of image examples. Citizens would also want to know what the threshold for the use of facial recognition technology actually is: whether, in fact, it is just used to track down those suspected of perpetrating serious crimes, or stretches as far as spotting individuals not complying with Covid-19 regulations.

We also need to trust our technology partners. Most of the UK’s biometric surveillance capability, after all, is supplied by private companies – chiefly, Hikvision and Dahua. That these two companies have recently been accused of aiding in the persecution of Uyghur Muslims in Xinjiang province by the Chinese government (allegations both firms deny) underscores the need for ethical standards to be integrated into the government’s procurement not only of facial recognition technology, but of CCTV cameras generally. Police and government bodies are unlikely to win the trust and confidence of the public to use such systems when the make and model of cameras in our schools, hospitals and public places are the same as those ringing the fences of concentration camps.

Channels for holding government and law enforcement agencies accountable for the use of this technology are also essential. This includes clearly setting out guidelines on the minimum standards for the use of facial recognition algorithms in schools, police stations and other publicly-run buildings, the level of accreditation – if any – required of companies for their use in commercial spaces, and how members of the public can register complaints should they feel that their image has been collected inappropriately.

In a technology-driven world where decisions are increasingly likely to be automated, the need for clear oversight and accountability is obvious. Otherwise, to paraphrase Hannah Arendt, we’ll have surveillance tyranny without a tyrant.

The surveillance question of our times

Last month I was pleased to be invited to speak at the launch of the Ada Lovelace Institute’s three-year research into the challenges of biometric technology. The Ryder Review considers the legal and societal landscape against which future policy discussions about the use of biometrics will take place and the extent to which the current distinctions between established regulated biometrics (fingerprints and DNA) and others, including facial recognition, adequately reflect both risk and opportunity.

The event noted that, while it’s more than a decade since the government abandoned the concept of compulsory ID cards, we are nonetheless witnessing a change from the standard police surveillance model of humans looking for other humans to an automated, industrialised process – a moment that has been compared by some to the move from line fishing to deep ocean trawling. In that context, we should recognise concerns that we may be stopped on our streets, in transport hubs, outside arenas or school grounds on the basis of AI-generated selection and required to prove our identity to the satisfaction of the examining officer or of the algorithm itself. The ramifications of AI-driven facial recognition in policing and law enforcement are both profound enough to be taken seriously and close enough to require our immediate attention.

Following the arrest of the suspected Brooklyn attacker, the New York City police commissioner, Keechant Sewell, said: “We were able to shrink his world quickly, so he had nowhere left to turn.”

Facial recognition technology will dramatically increase the speed at which the police can shrink the world of a terror suspect on the run in the future. How far it should be allowed to shrink everyone else’s world in doing so is the surveillance question of our times – one that, so far, has not been answered.